To date, most implementations of AI in applications using GPT Large Language Models (LLMs) rely on calling the OpenAI API, which surprisingly, contrary to what its name might suggest, is not open-source. This pattern has become so common that a term referring to AI apps based upon OpenAI’s models has become popularized in Silicon Valley — the “OpenAI wrapper.”

GPT-1 and GPT-2’s code continue to be available to researchers & developers on Github. But beginning with GPT-3, OpenAI decided to continue development with a closed-source model. Even though much of the training data for an LLM is gathered from the open Internet, the datasets that OpenAI uses to train their latest models are considered a proprietary secret. This makes it difficult to root out sources of bias or misinformation, which could lead to undesirable or even unsafe AI behavior.

OpenAI’s founder, Sam Altman, went so far as to lobby Congress to require an “AI license” from a U.S. government agency to develop new AI models, and the registration of models prior to releasing them. If such provisions were to become law, it would massively advantage large corporations such as OpenAI, Microsoft (backer of OpenAI), and Google. Unlike open source communities & independent developers, well-financed firms can easily afford to comply with the “red tape” of laws which they likely will have a hand in writing.

Along a similar thread, the EU is considering requiring general purpose AI and GPT models to register in an EU database and comply with a litany of requirements written by bureaucrats. These restrictions will undoubtedly stifle competition, and put a chilling effect on AI innovation in any jurisdictions that adopt them.

To better understand the importance of open-source LLM models, why they should continue to be freely developed, and why you should consider using them for your applications — let’s dive into some of their benefits.

Benefits of Open LLM models vs. AI-as-a-Service (AIaaS)

It is true that GPT-3.5 and GPT-4 are currently more advanced than open-source models, which can roughly match the reasoning capabilities of GPT-3. However, for extending and developing many AI-based applications, an open LLM model with a GPT-3 level of performance is still practically very useful. This is especially true when considering that local LLM models:

- Can be deployed on commodity infrastructure (locally, on-premises, in the cloud)

- After training, make inferences using CPUs without the need for GPUs

- Run in-memory with 8 GB RAM vs. hundreds of GBs of VRAM

- Can operate even without an Internet connection

- Protect users’ privacy – they don’t send prompts to a remote API

- Run uncensored models without the limitations of commercial services

- Avoid blanket, country-wide bans imposed by services like ChatGPT

- Incur no per-token API costs, unlike with AI-as-a-Service (AIaaS)

- Ingest, process, and enable Q&A with private, internal documents

It’s also worth bearing in mind that open LLMs are constantly becoming more capable, with 40B parameter models like Falcon-40B-Instruct becoming available and topping the leaderboards when benchmarked against a set of common GPT prompts. Large companies like Meta and Google, who are relative “laggards” in the AI “arms race” (compared to Microsoft) are increasingly throwing their weight behind open-source AI models and AI tooling.

With “mercenary” AI researchers leaving for the greater resources that frontrunners like OpenAI can offer in the short-term, supporting the open-source AI ecosystem may be the most effective way for the other tech companies to compete. By working with us to deploy your own open-source AI infrastructure, you benefit from the “pluggable” nature of the models. As the open-source AI models rapidly develop, you can download the new-and-improved GGML models in .bin format to your LocalAI infrastructure, so that your applications become smarter too.

Applications Enabled by Local AI using Open-Source LLMs

Edge AI Applications

Local LLM models are also ideal for edge AI applications where processing needs to happen on a users’ local device, including mobile devices which are increasingly shipping with AI processing units, or consumer laptops like Apple’s Macbook Air M1 and M2 devices.

Compared to the 175 billion parameters used by ChatGPT’s GPT-3.5, most open LLMs are trained with 6-7B parameters, so they are much lighter weight in their requirements for system resources. Also, open LLMs are able to make inferences based on prompts with only CPUs (no GPUs), considerably reducing their operating cost and expanding the possible environments where they can be run or hosted.

Generative AI Applications

Local LLMs can be leveraged for generative AI applications such as text & image generation, similar to OpenAI’s ChatGPT and Dall-E. Many open-source LLMs permit their output to be used for commercial purposes, making them ideal for increasing employee productivity without the privacy risks of proprietary tools like Microsoft 365 Copilot. Compared to the token-based pricing model of AI-as-a-Service, the costs associated with using a locally hosted AI are more predictable as they are based on the number of LocalAI servers you deploy.

Private AI Applications

Private AI applications are also a huge area of potential for local LLM models, as implementations of open LLMs like LocalAI and GPT4All do not rely on sending prompts to an external provider such as OpenAI.

You can even ingest structured or unstructured data stored on your local network, and make it searchable using tools such as PrivateGPT. Note that this does not require you to train or fine-tune a model yourself, which can require days or weeks of costly GPU instances from a provider like AWS. You can simply use any pre-trained GPT model of your choice, but use it to ask questions against the custom documents that you specify. The potential use cases include academic & scientific research, corporate training & development, customer support, sales & service, and much more.

All the prompts and documents remain on your own infrastructure, which can be on-premises, a datacenter, or cloud provider of your choice.

Requirements for Locally Hosted AI using LocalAI

Below are general requirements – the specific technical requirements for running open LLM models locally, depends on the number of max tokens each request will generate, and the number of simultaneous requests. It also depends if you want to load more than one model into memory, if your application provides the possibility of interacting with multiple models.

For a specific estimation of what your AI workload might require, feel free to contact our team of self-hosted AI consultants.

Minimum requirements for running open-source LLM with LocalAI (per node)

- 4 Physical CPU Cores

- 8 GB RAM

- 3 – 8GB Storage per 6B/7B Parameter Model

Recommended requirements for running open-source LLM with LocalAI (per node)

- 6 Dedicated CPU Cores with AVX/AVX2 Support

- 16 GB RAM

- 3 – 8GB SSD Storage per 6B/7B Parameter Model

Risks of Relying on Third-Party AI-as-a-Service APIs

If you are a start-up or internal team considering building an AI-enabled application based on an LLM, you should seriously consider the business risks of relying on a third-party API. Like many third-party Reddit clients learnt the hard way recently, a third-party API can change its pricing model with no recourse to you. As an area where regulation is regularly changing, relying on OpenAI or other AIaaS providers is especially risky, as your AI service could suddenly stop serving your country for legal reasons. Citing privacy concerns, Italy’s data-protection authority banned ChatGPT for the entire country in April 2023. Other countries, or entire blocs like the EU, could do the same.

By using a locally hosted LLM on your own infrastructure, you can minimize the risk of blanket bans that might affect your hosting provider’s location, the country where you do business, and the location of your end users — giving you time to react to new regulations.

Even though OpenAI prohibits users of its API from using it to develop models which can compete against OpenAI itself, their Terms of Use does not prohibit OpenAI from using the telemetry it gathers from your users’ prompts to determine new business opportunities for itself. If your OpenAI-based application is successful enough, they could theoretically even build an AI product to compete with yours, and cut off your access to their API without stating a reason.

Locally Hosted AI Alternatives to AIaaS

LocalAI, GPT4All, and PrivateGPT are among the leading open-source projects (based on stars on Github and upvotes on Product Hunt) that enable real-world applications of popular pre-trained, open LLM models like GPT-J and MPT-7B (permitted for commercial use) listed on Hugging Face, a repository of open LLM models.

The LLaMA 2 model is open-source, and unlike its predecessor, LLaMA, it is licensed freely for commercial use (not only research and non-commercial use).

LocalAI provides an OpenAI-compatible API endpoint, for developing new AI-enabled applications using the OpenAI programming libraries, or extending virtually any app which already integrates with OpenAI.

For production-scale applications, LocalAI can be deployed with scale-out replicas orchestrated by Docker or Kubernetes. The OpenAI-compatible API is an HTTP endpoint that can be load balanced behind a reverse proxy such as NGINX.

Our Self-hosted AI Consultants provide consulting & implementation services including:

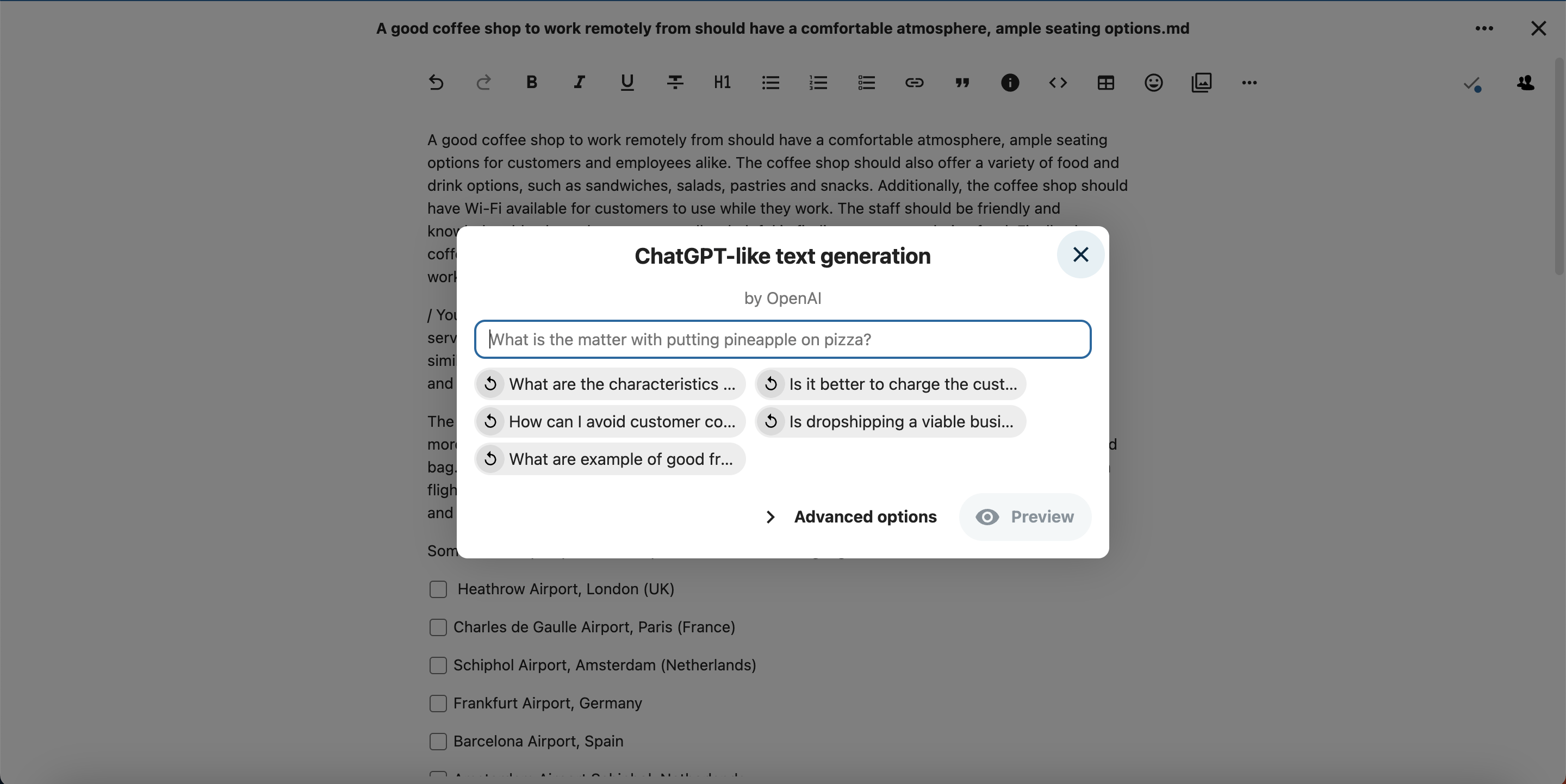

- Integrating LocalAI with existing apps such as Nextcloud Text, Notes, Photos

- GPT-like Text Generation

- Stable Diffusion Image Generation

- AI Text-to-Speech

- TensorFlow Image Classification

- Using LocalAI as an OpenAI-compatible API for your AI application

- Architecture design for load-balancing & operating a robust LocalAI service

- Selecting an appropriate open-source LLM for your application

- Fine-tuning (training) an existing AI model for your application

- Ingesting custom documents into PrivateGPT, and making them searchable

With our extensive experience as infrastructure architects & cloud consultants, the Autoize team can help your business leverage open-source AI models as an alternative to “Big Tech” AI services such as the OpenAI API, Azure OpenAI Service, Vertex AI PaLM API, and Amazon Bedrock.

Contact our self-hosted AI consultants for a conversation (with a human, not GPT!) about implementing locally hosted AI.