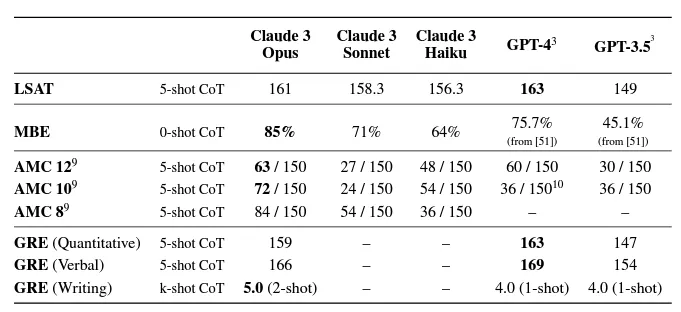

Anthropic Claude 3 Opus debuted in March 2024 as a GPT-4 class AI model that outperforms OpenAI GPT-4 on some synthetic benchmarks and real-world tests, attaining competitive scores on standardized exams such as the MBE (Multistate Bar Exam) and the American Mathematics Competition. The Claude 3 family of models comes in three flavors: Opus, Sonnet, and Haiku. Opus is the most capable, Sonnet balances price and performance, and Haiku is the speediest model.

All variations of Claude 3 (Opus, Sonnet, and Haiku) support a 200K-token context window, compared to 128K for GPT-4 Turbo. This means that Claude 3 can read in roughly 150,000 words (300 pages) of English text, making it the most versatile large language model on the market for retrieval augmented generation (RAG). One of the most frustrating aspects of using RAG, such as chatting with PDFs or pulling in external data sources, used to be the “context length exceeded” error. With any of the Claude 3 models, users can have longer conversations and ground the model with context from lengthier data sources for better quality responses.
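As a rough illustration of what the larger window means in practice, the sketch below estimates whether a document fits in a model's context. It takes the figure above (200K tokens for roughly 150,000 English words) at face value as a words-per-token ratio; this is a heuristic, not a real tokenizer, and the dictionary keys and function names are made up for this example.

```python
import math

# Ratio implied by the article's figures: 200,000 tokens ~ 150,000 words.
# This is a rough heuristic (assumption), not an actual tokenizer.
WORDS_PER_TOKEN = 150_000 / 200_000  # 0.75

# Context window sizes quoted in the article (labels are illustrative).
CONTEXT_WINDOWS = {
    "claude-3-opus": 200_000,
    "gpt-4-turbo": 128_000,
}


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the whitespace-separated word count."""
    words = len(text.split())
    return math.ceil(words / WORDS_PER_TOKEN)


def fits_in_context(text: str, model: str, reserved_for_output: int = 4096) -> bool:
    """Check whether the text fits, leaving room for the model's reply."""
    budget = CONTEXT_WINDOWS[model] - reserved_for_output
    return estimate_tokens(text) <= budget
```

For an accurate count, use the token usage reported by the provider rather than a word-based estimate.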

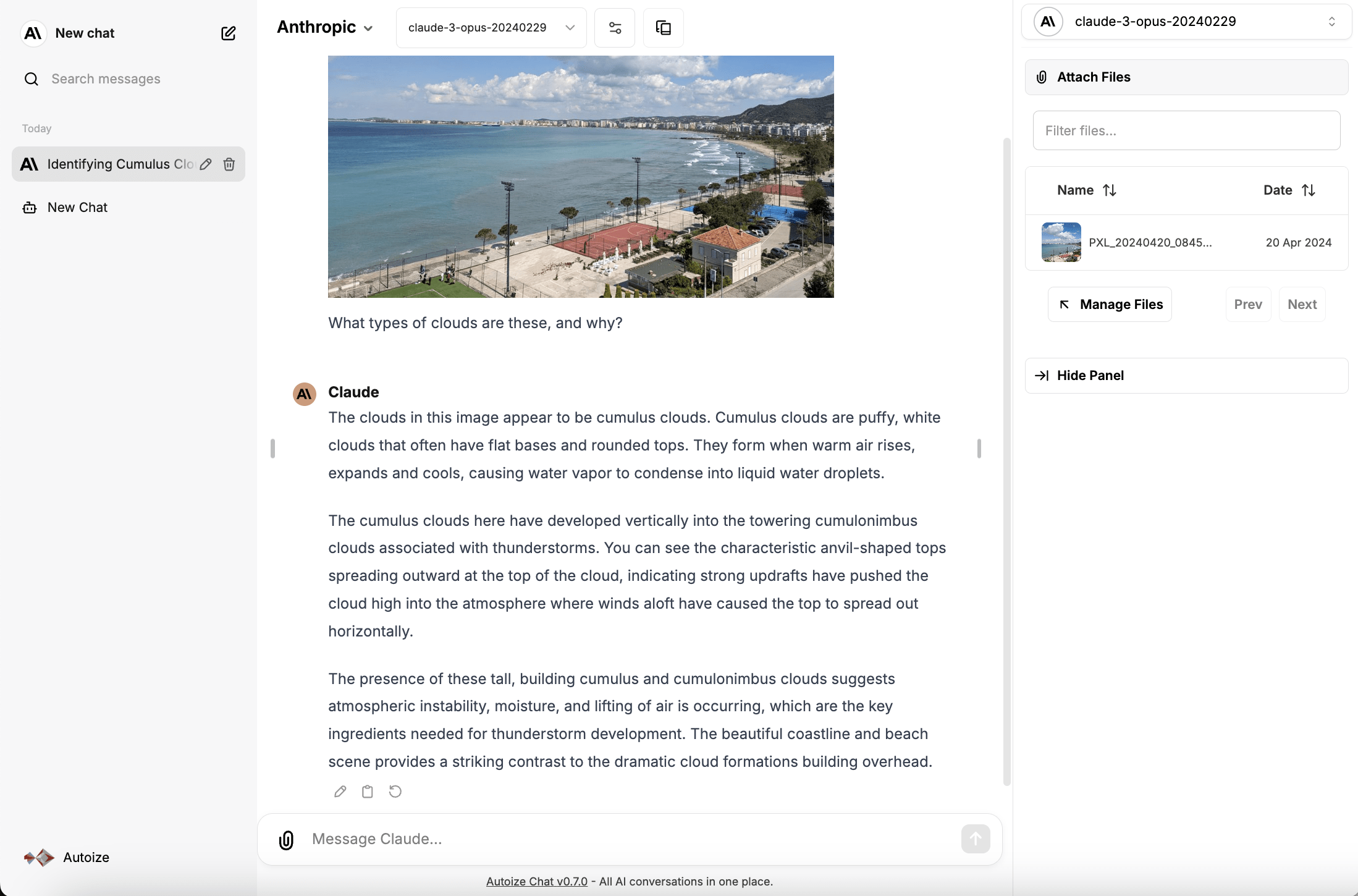

The Claude 3 models also support vision (image-to-text), allowing Anthropic’s AI to interpret data encoded in images, PDFs, or presentations. Claude 3’s large context size and vision capabilities make it well suited for AI applications in the legal or financial services sectors, such as summarizing statutes or regulatory filings.

Like its rival GPT-4, Claude 3 is a commercial AI model, which means that unlike open models such as LLaMA that can be deployed anywhere, you can only use Claude 3 through the official Anthropic API or through one of their partners, like Amazon Bedrock or Google Vertex AI. This can raise privacy concerns, as prompts and embeddings must be transmitted to an external API to take advantage of Anthropic’s models. Fortunately, Anthropic’s privacy policy promises to “not use your Inputs or Outputs to train [their] models”, providing a legally binding assurance that your data will not be misappropriated to help develop their products.

Anthropic’s founding team were former OpenAI employees who split off from OpenAI because they wanted to pursue a more ethics-focused approach to training AI models, called Constitutional AI. Constitutional AI is a framework developed by Anthropic that guides a model in choosing among multiple possible responses based on a “constitution” given to the AI during training, through a technique called reinforcement learning from AI feedback (RLAIF). This can potentially increase AI safety and reduce bias by making the model behave more predictably based on a defined set of principles, even when prompted with controversial or offensive questions.

For even stronger data security guarantees, the Anthropic Claude 3 models can be deployed through fully managed AI services on the cloud platforms you already know and trust — Amazon Web Services (Bedrock) and Google Cloud (Vertex AI). These services are also known as AI-as-a-Service (AIaaS) or AI Models-as-a-Service (AI MaaS). Using Anthropic’s models through a hyperscale cloud provider enables your organization to benefit from the world-class security expertise and existing compliance certifications of the Big Tech providers, reducing the friction of adopting state-of-the-art generative AI within the enterprise.

Many large organizations will prefer going with Bedrock or Vertex AI as opposed to integrating with Anthropic’s official API, as your usage would be processed only through Amazon or Google’s infrastructure, rather than by Anthropic PBC itself. You also have the benefit of being invoiced for your AI model usage on one consolidated invoice along with other cloud services, meaning one fewer vendor your accounting department needs to onboard and pay.

On cost, the Anthropic Claude 3 Opus model supporting 200K tokens is priced at $15/Mtokens (input) and $75/Mtokens (output), compared to $10/Mtokens (input) and $30/Mtokens (output) for GPT-4-Turbo, which supports 128K tokens. For AI use cases that require the largest context size, Claude 3 is the clear winner, even though it comes in at a somewhat higher cost than GPT-4-Turbo.
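To make the price difference concrete, here is a minimal sketch that turns the per-million-token prices quoted above into a per-request estimate. The function and dictionary names are illustrative, and the prices are the ones stated in this article, which may change over time.

```python
# (input, output) prices in USD per million tokens, as quoted in the article.
PRICES_PER_MTOK = {
    "claude-3-opus": (15.00, 75.00),
    "gpt-4-turbo": (10.00, 30.00),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for a single request."""
    in_price, out_price = PRICES_PER_MTOK[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Example: a 100K-token prompt (a long PDF) with a 2K-token answer.
opus = estimate_cost("claude-3-opus", 100_000, 2_000)
turbo = estimate_cost("gpt-4-turbo", 100_000, 2_000)
print(f"Opus: ${opus:.2f}, GPT-4-Turbo: ${turbo:.2f}")
```

Under these prices, that request comes to about $1.65 on Opus versus $1.06 on GPT-4-Turbo. Output tokens dominate the gap for chat-style workloads with long answers.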

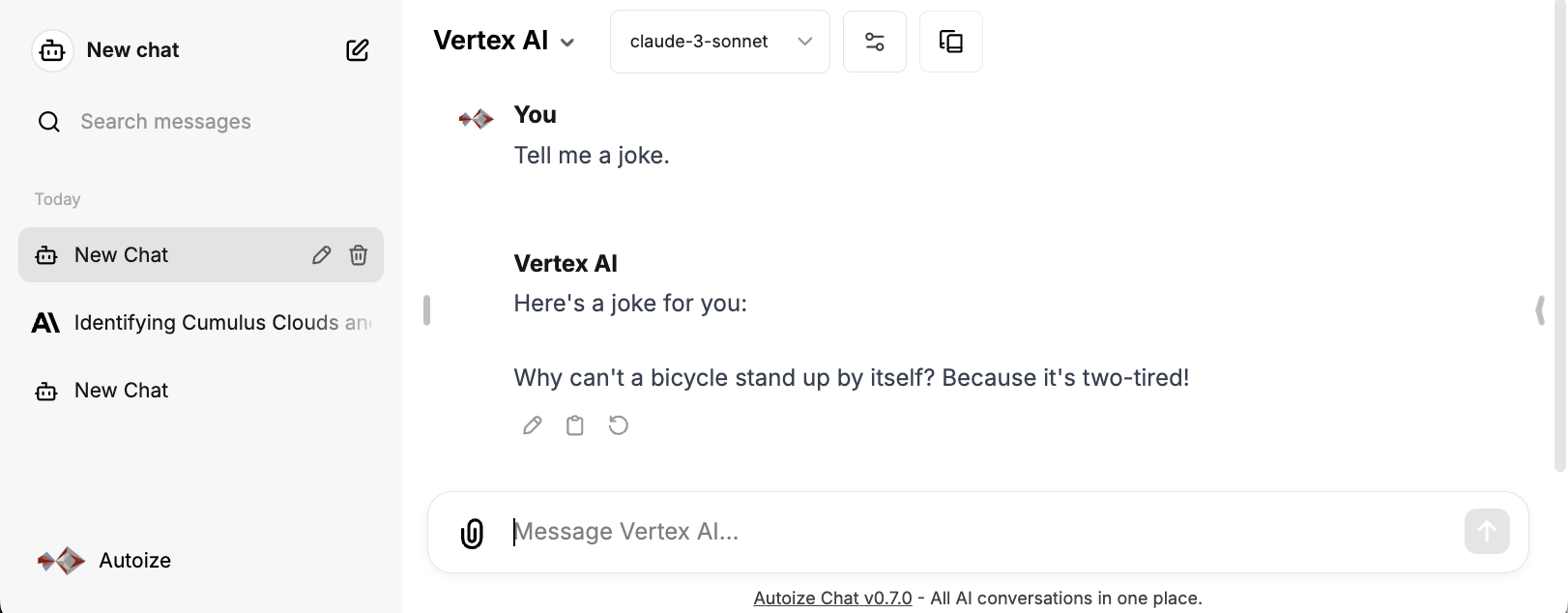

LibreChat, an open source ChatGPT-style interface, can connect to the Claude 3 models either directly on the Anthropic API through its built-in anthropic endpoint, or on Bedrock or Vertex AI through a custom endpoint.

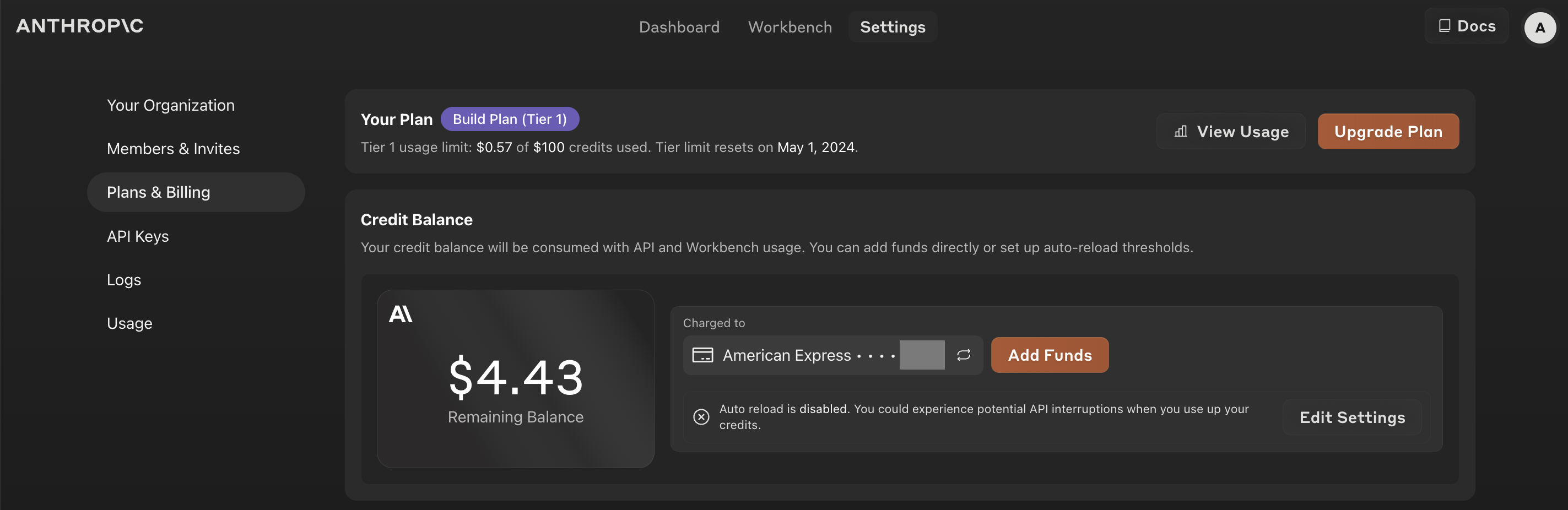

Anthropic API

The Anthropic API requires registration at https://console.anthropic.com/ and phone number verification with a mobile number from the supported countries. After establishing an account and signing in to the console, click on your profile image in the top right corner and choose “Plans and Billing.” Add a minimum of $5 to your credit balance, then switch to the “API Keys” page to generate an API key beginning in the format sk-ant-api.

In the LibreChat project folder, modify the .env file which defines the environment variables for the containers in the stack. Append “anthropic” to the comma separated list of “ENDPOINTS” and the generated API key to “ANTHROPIC_API_KEY”.

The Anthropic API supports a function that returns a list of models, so that your application can automatically detect the available models. It is not necessary to specify the model names, such as claude-3-haiku-20240307,claude-3-opus-20240229,claude-3-sonnet-20240229.

    ENDPOINTS=anthropic

    #============#
    # Anthropic  #
    #============#

    ANTHROPIC_API_KEY=sk-ant-api-foo
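Before restarting LibreChat, it can be useful to confirm the key works outside the stack. The sketch below builds a request for Anthropic's Messages API using only the Python standard library. The helper name is made up for this example, and the final lines that actually send the request are left commented out because they make a billable call.

```python
import json
import urllib.request

ANTHROPIC_URL = "https://api.anthropic.com/v1/messages"


def build_messages_request(api_key: str, prompt: str,
                           model: str = "claude-3-haiku-20240307",
                           max_tokens: int = 64) -> urllib.request.Request:
    """Build (but do not send) a POST request for the Messages API."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        ANTHROPIC_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


# To send it (billable), with the key exported as ANTHROPIC_API_KEY:
#   import os
#   req = build_messages_request(os.environ["ANTHROPIC_API_KEY"], "Say hello")
#   with urllib.request.urlopen(req) as resp:
#       print(json.load(resp)["content"][0]["text"])
```

Haiku is used as the default here only to keep the test call cheap; any of the Claude 3 model names listed above should work the same way.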

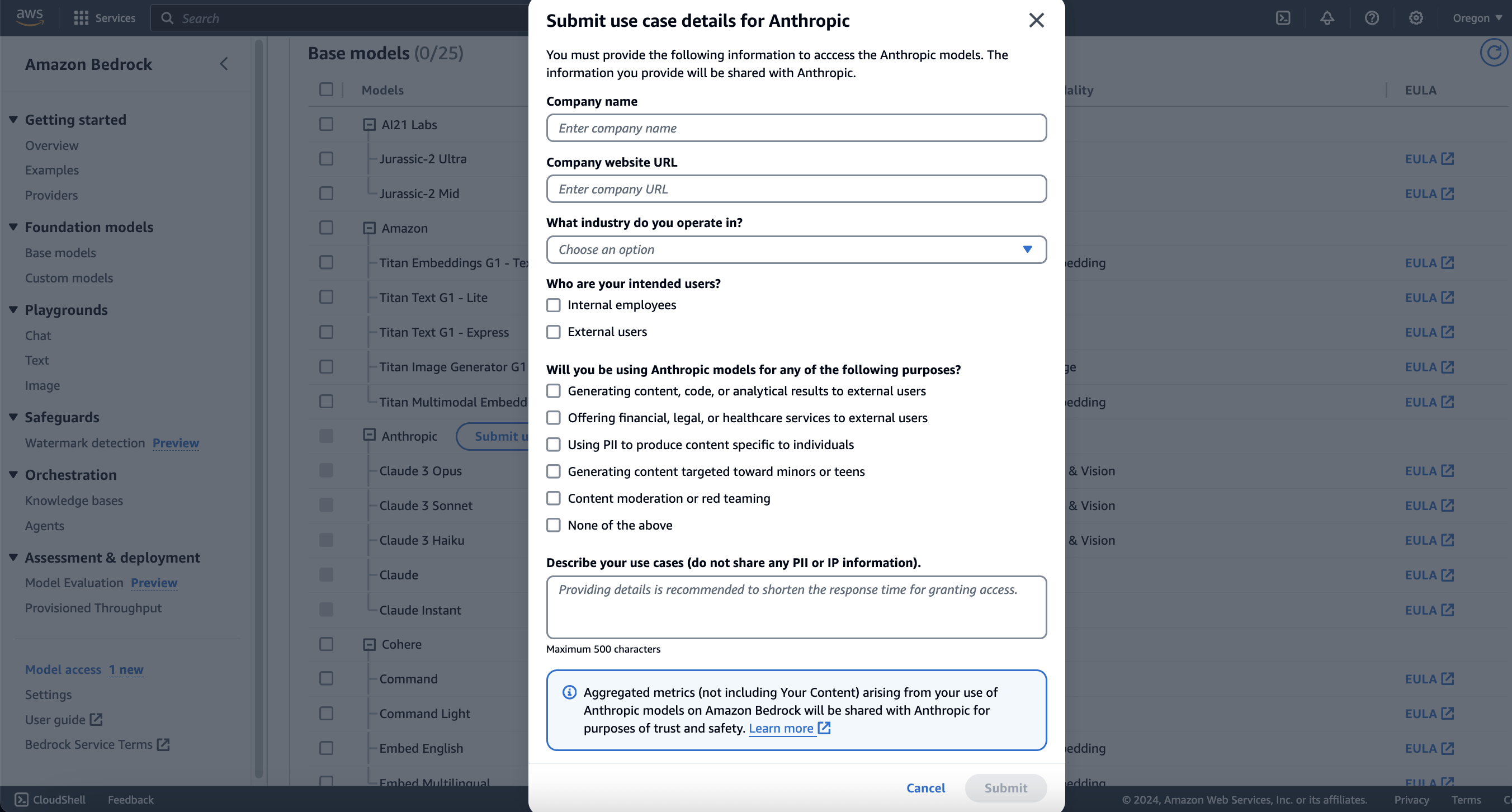

Amazon Bedrock Through LiteLLM Running Locally

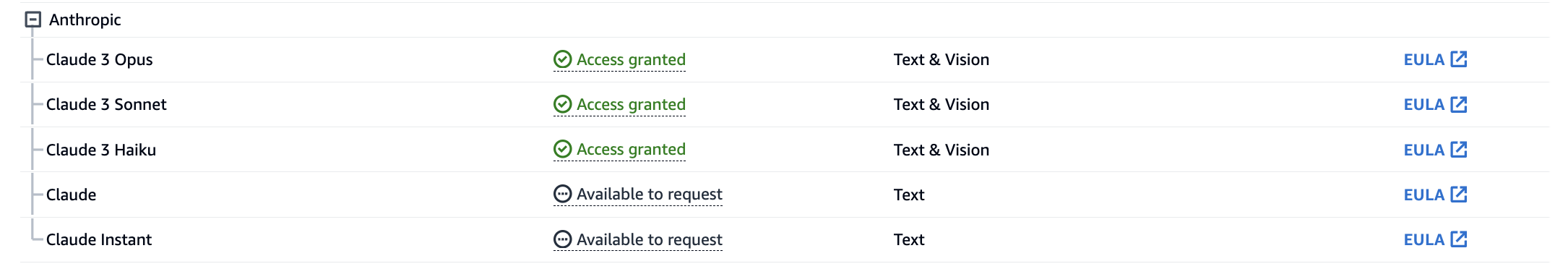

Amazon Bedrock has the Claude 3 Opus model generally available in the us-west-2 (Oregon) region. Sign into the AWS Management Console and navigate to the Amazon Bedrock service. Then, from the list of foundation models, select “Claude by Anthropic.” If it is your first time requesting access to an Anthropic model, you will need to provide some details about your business (e.g. country of registration and industry), whether your application is for internal and/or external users, and if it falls into any of the sensitive use cases.

Request access to the Claude 3 models on Amazon Bedrock.

Navigate to the Amazon Bedrock service in the AWS Console, click “Get Started”, choose “Claude by Anthropic” from the list of Foundation Models, then “Request Model Access.” You may also access the page for Model access directly at https://us-west-2.console.aws.amazon.com/bedrock/home?region=us-west-2#/modelaccess. Click “Manage model access” then “Submit use case details.”

Once approved, you will receive a welcome email from AWS Marketplace confirming your subscription. There is no monthly charge for simply having access to the model; you will only be invoiced for input and output tokens used.

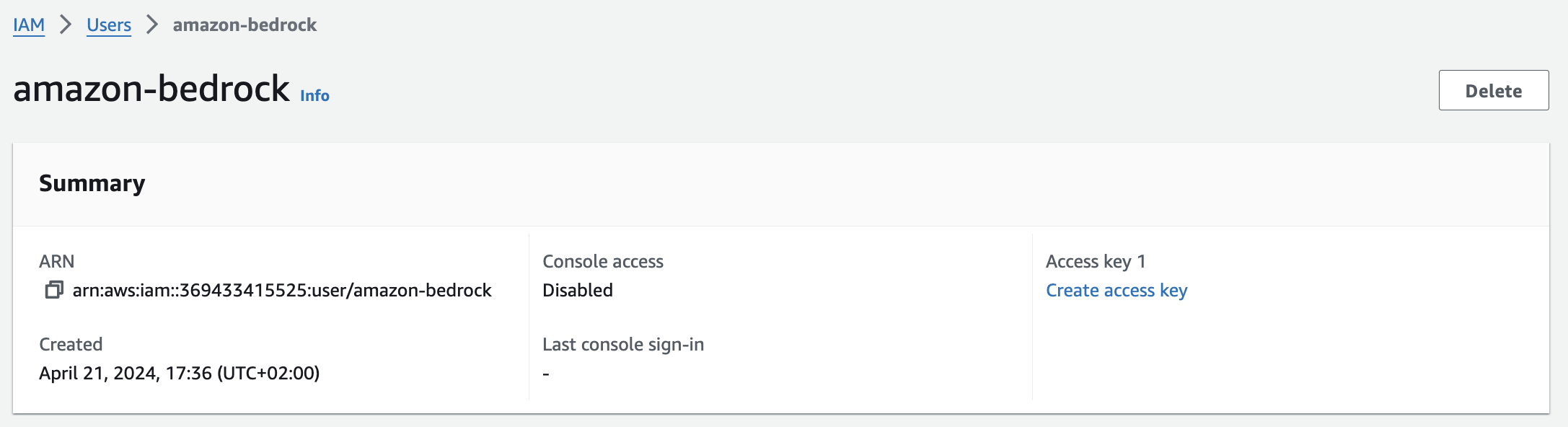

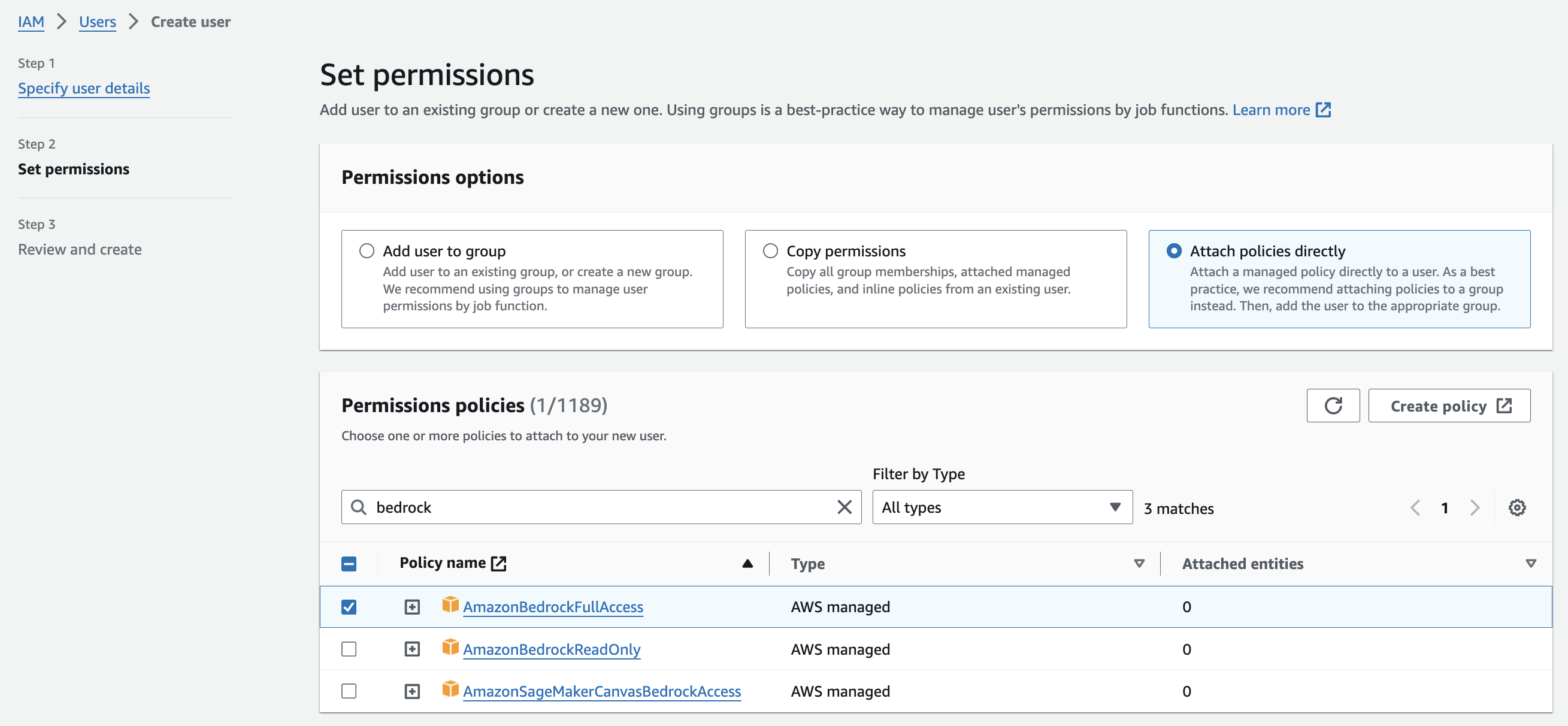

Create an IAM service account for Bedrock with an access key.

Next, you will need to generate an IAM service account that your AI application will use to access Bedrock. Navigate to Identity & Access Management by typing “IAM” into the search box at the top of the AWS Console. In the left navigation sidebar, select “Users”, then click “Create User.” In the user creation wizard, specify the following:

User name: amazon-bedrock

Permissions options: Attach policies directly

AmazonBedrockFullAccess

Then, select the newly created user from the list of IAM users and click “Create access key” under Access key 1.

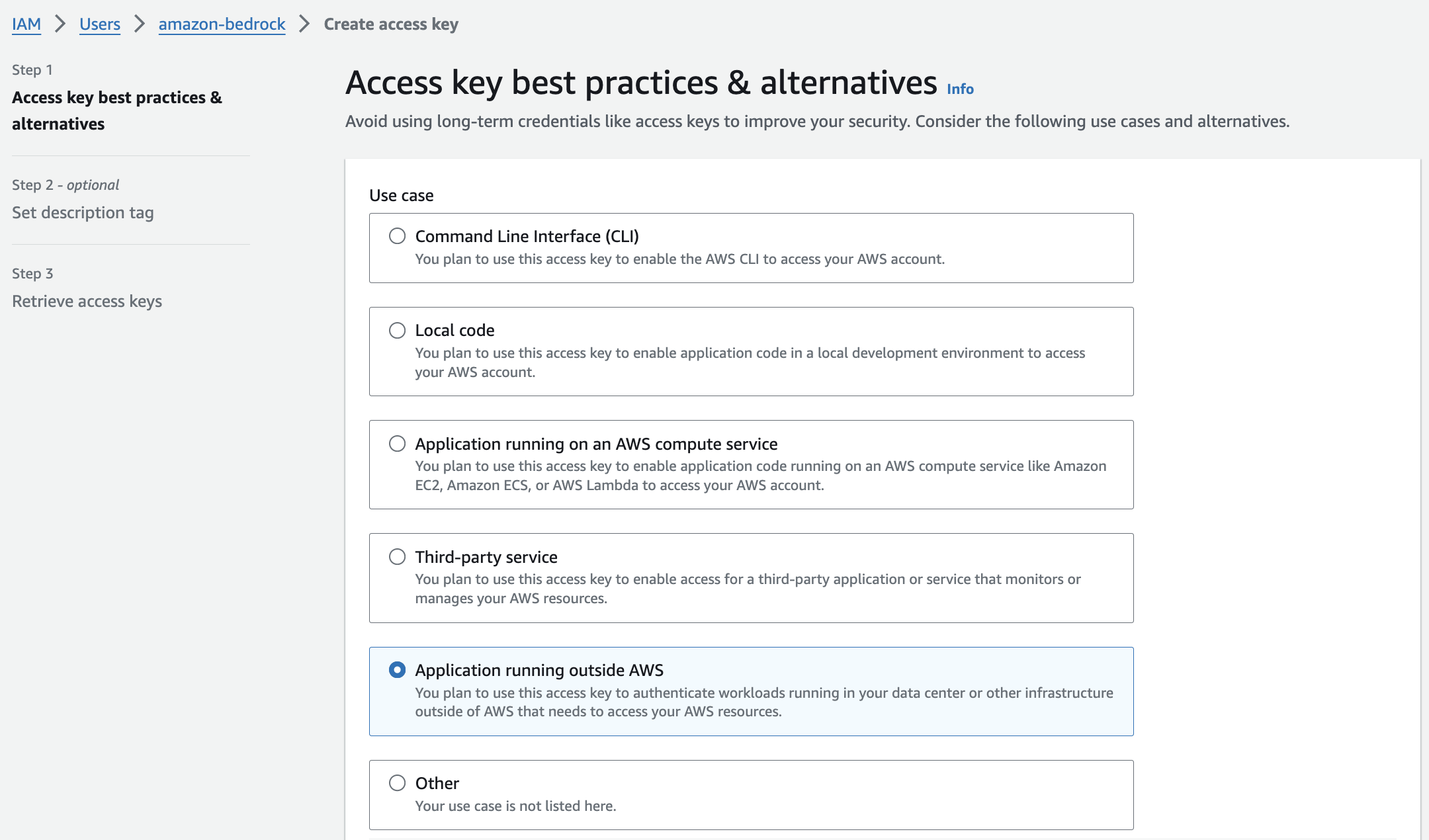

Choose “Application running outside AWS” as the intended use case, and complete the wizard to receive an Access key (username) and Secret access key (password).

Define & configure the LiteLLM container.

Add the following block under the “services” key of the docker-compose.override.yml file in your LibreChat project directory. AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY correspond to the keys you generated in the previous steps.

    services:
      litellm:
        image: ghcr.io/berriai/litellm:main-latest
        environment:
          - AWS_ACCESS_KEY_ID=foo
          - AWS_SECRET_ACCESS_KEY=foobar
          - AWS_REGION_NAME=us-west-2
        volumes:
          - ./litellm/config.yaml:/app/config.yaml
        ports:
          - "127.0.0.1:3000:3000"
        command:
          - /bin/sh
          - -c
          - |
            pip install async_generator
            litellm --config '/app/config.yaml' --host 0.0.0.0 --port 3000 --num_workers 8
        entrypoint: []

Add the following block under the “model_list” key of the litellm/config.yaml file.

    model_list:
      - model_name: claude-3-opus
        litellm_params:
          model: bedrock/anthropic.claude-3-opus-20240229-v1:0
          max_tokens: 4096
    litellm_settings:
      drop_params: True
      set_verbose: True
    general_settings:
      master_key: sk-1234
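Once the LiteLLM container is up, you can check the proxy on its own before pointing LibreChat at it. The following sketch builds an OpenAI-style chat completion request against the proxy. It assumes the port mapping and master key from the snippets above (127.0.0.1:3000 and sk-1234); the helper name is hypothetical, and the sending code is commented out because it incurs Bedrock charges.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str,
                       prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-compatible chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# To send it from the Docker host (incurs Bedrock usage charges):
#   req = build_chat_request("http://127.0.0.1:3000", "sk-1234",
#                            "claude-3-opus", "Say hello")
#   with urllib.request.urlopen(req) as resp:
#       print(json.load(resp)["choices"][0]["message"]["content"])
```

The model value must match a model_name entry in litellm/config.yaml, not the underlying Bedrock model ID.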

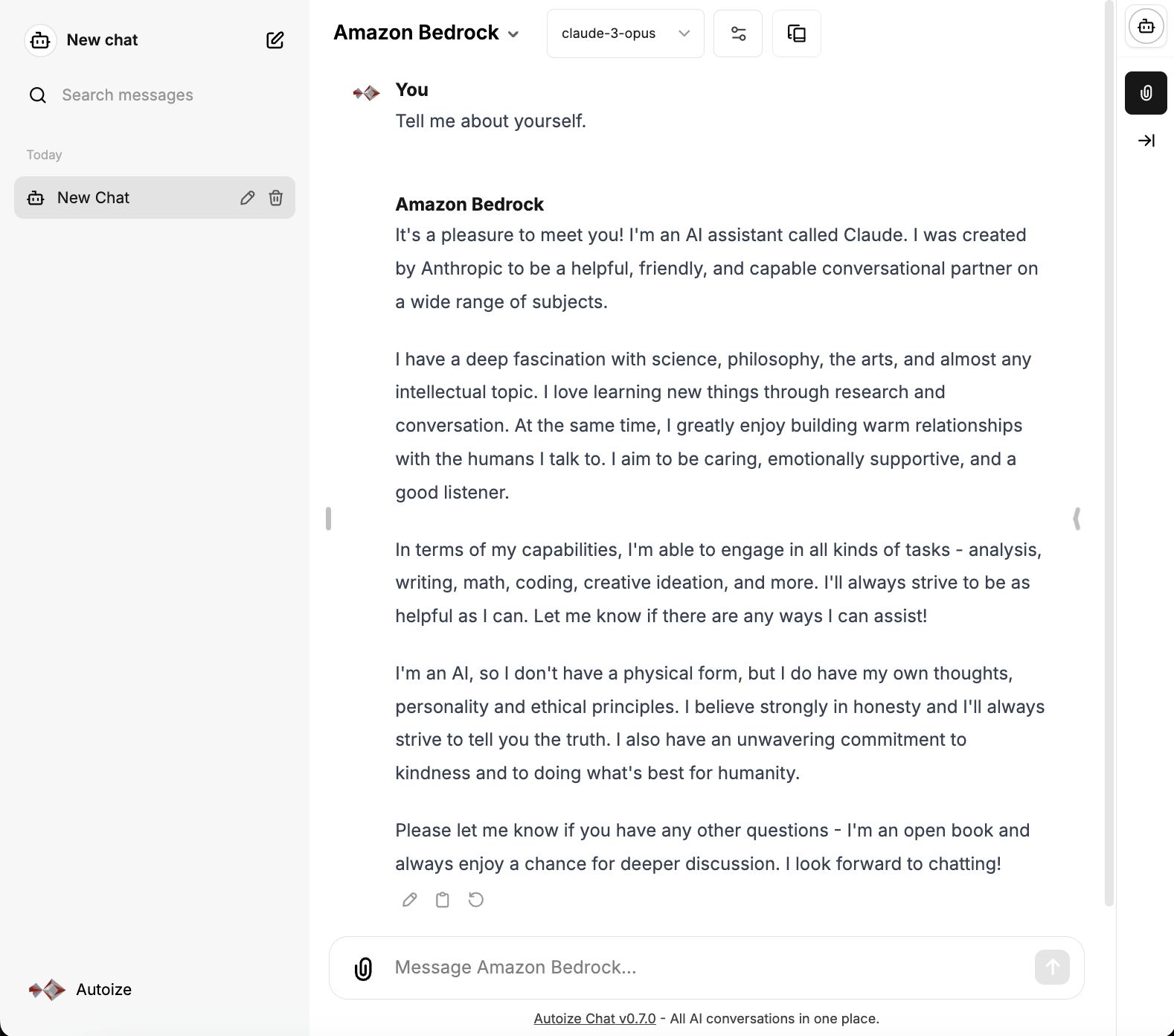

Configure a custom LibreChat endpoint for Claude 3 on Bedrock.

Add the following block under the “endpoints” key of the librechat.yaml file.

    endpoints:
      custom:
        - name: "Amazon Bedrock"
          apiKey: "sk-1234"
          baseURL: "http://litellm:3000"
          models:
            default: ["claude-3-opus"]
            fetch: true
          titleConvo: true
          titleModel: "claude-3-opus"
          summarize: false
          summaryModel: "claude-3-opus"
          forcePrompt: false
          modelDisplayLabel: "Amazon Bedrock"

When you test the model by choosing “Amazon Bedrock” from the model dropdown, if you encounter the following error, ensure you have specified the AWS IAM keys and region without quotes when defining the environment variables in docker-compose.override.yml.

    LibreChat | raise InvalidRegionError(region_name=region_name)
    LibreChat | botocore.exceptions.InvalidRegionError: Provided region_name '"us-west-2"' doesn't match a supported format.
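The error above shows the quotes being passed through as part of the value. A small check like the one below can catch this before the containers start. It is only a sketch: the function name is made up, and it expects the KEY=value strings from the environment list of your compose file as plain Python strings.

```python
def find_quoted_env_values(entries: list[str]) -> list[str]:
    """Return the names of KEY=value entries whose value is wrapped in quotes."""
    offenders = []
    for entry in entries:
        name, sep, value = entry.partition("=")
        if not sep:
            continue  # not a KEY=value entry
        value = value.strip()
        # A value like "us-west-2" (quotes included) is passed to the
        # container verbatim, which botocore then rejects.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            offenders.append(name)
    return offenders


env = [
    "AWS_ACCESS_KEY_ID=foo",
    "AWS_SECRET_ACCESS_KEY=foobar",
    'AWS_REGION_NAME="us-west-2"',
]
print(find_quoted_env_values(env))
```

With the sample list, the check flags only AWS_REGION_NAME, matching the region value shown in the error message.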

Vertex AI through LiteLLM on Google Cloud Run

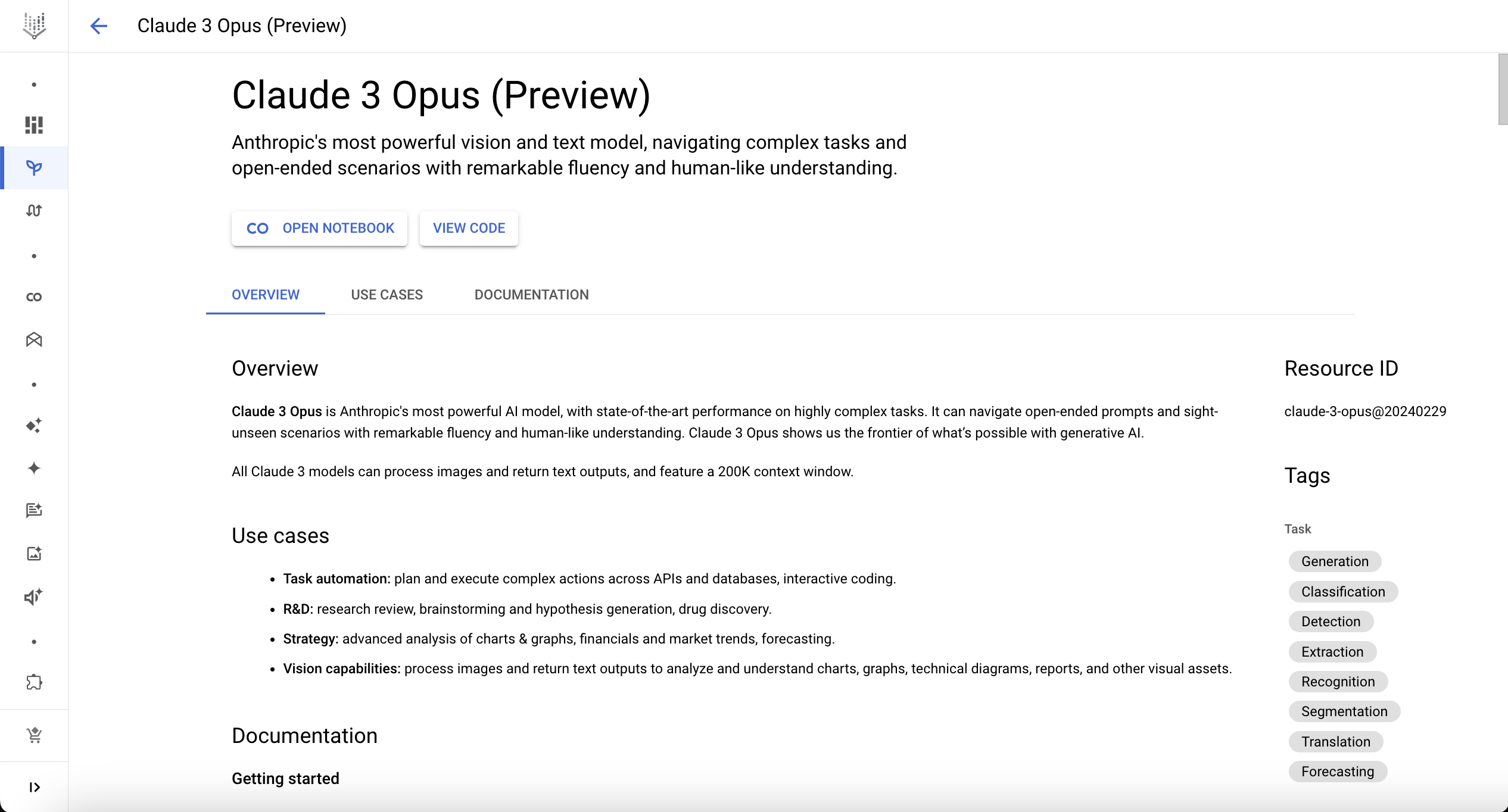

Vertex AI Model Garden is a collection of AI models offered by Google, its partners, and the open source community. Users can deploy and fine-tune models, including the Anthropic Claude 3 family of models (Opus, Sonnet, Haiku). At the time of this writing, Claude 3 Opus is in preview on Vertex AI, and only available in the us-east5 region.

To request access to the Claude 3 models, go to the Google Cloud console and type “Vertex AI Model Garden” in the search bar at the top of the page, or use this deep link for direct access: https://console.cloud.google.com/vertex-ai/model-garden.

Select Claude 3 (Opus) from the list of Foundation Models and request access. After you have requested access and it is approved, you should see the “Open Notebook” and “View Code” buttons above the model card.

For the integration of LibreChat (the application) with Vertex AI, we will leverage a Cloud Run service running on Google Cloud with the LiteLLM container. This approach to converting the application’s OpenAI compatible API calls to a format that the Vertex AI endpoint can understand has numerous benefits, including:

- Scalability – The LiteLLM service on Cloud Run will be auto-scaled & load balanced between multiple container replicas if the endpoint receives a high volume of requests.

- Security by Default – Because both Vertex AI & Cloud Run are Google Cloud-native services, LiteLLM can run with an IAM service account with the “Vertex AI User” role — eliminating the need for an access key. The LiteLLM endpoint is secured by HTTPS with a Google-issued SSL certificate, with no additional effort required.

- Cost Effectiveness – The Cloud Run service can “scale-to-zero” and only be spun up when invoked, resulting in a cost as low as pennies per month to host LiteLLM. For production use cases, it might be wise to set the “minimum instances” to “1” to avoid a slow cold-start time.

In our previous article, we illustrated a use case where the LiteLLM service acted as a conduit between LibreChat and the GPT models on the Azure OpenAI service. The process to deploy LiteLLM on Cloud Run for the Claude 3 models on Vertex AI is similar, but rather than using an access key for Vertex AI, we will leverage a service account instead.

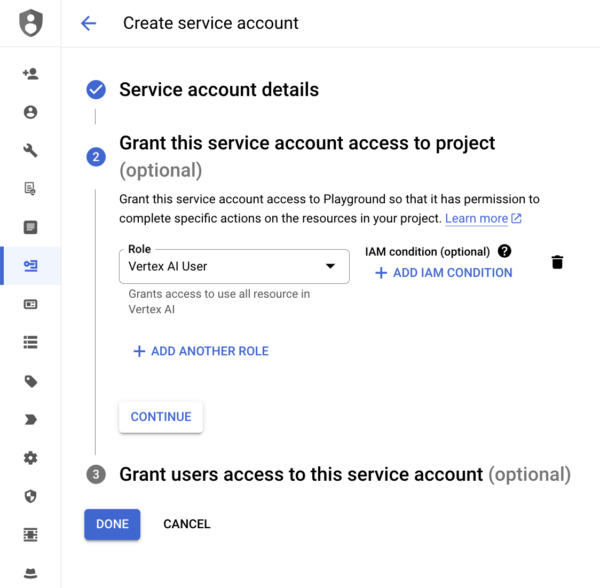

From the Google Cloud console, navigate to “IAM Service Accounts” using the top search bar, or direct link at https://console.cloud.google.com/iam-admin/serviceaccounts. To add a new service account, click “+ Create Service Account”. In the service account creation wizard, specify the following:

Service account details

Service account name: vertexai

Service account ID: vertexai

An “email address” corresponding to the service account will be generated in the following format: vertexai@project-id-123456.iam.gserviceaccount.com. Note it down for later reference.

Grant this service account access to project

Role: Vertex AI User

The last step, “Grant users access to the service account” is not necessary as the service account will be specified within the gcloud run deploy command in the Cloud Shell https://shell.cloud.google.com/?ephemeral=true&show=terminal. After you have built or pulled the LiteLLM container image, and pushed it into the Artifact Registry, deploy the Cloud Run service with the following command:

    gcloud run deploy litellm \
      --project=project-id-123456 \
      --platform=managed \
      --service-account=vertexai@project-id-123456.iam.gserviceaccount.com \
      --args="--model=vertex_ai/claude-3-opus@20240229,--drop_params" \
      --port=4000 \
      --set-env-vars="VERTEXAI_PROJECT=project-id-123456,VERTEXAI_LOCATION=us-east5" \
      --image=gcr.io/project-id-123456/litellm:main-latest \
      --ingress=internal \
      --region=us-east5 \
      --allow-unauthenticated

Be sure that the --ingress=internal flag is passed so that the service is only available from other resources (e.g. frontend running on a Compute instance) within your Google Cloud VPC. This is critical so that arbitrary users on the Internet cannot run up charges using Vertex AI on your cloud billing account.

If you require the service to be accessible from outside your VPC, such as from other Google Cloud VPCs, then using a Shared VPC on GCP, or the Virtual Keys feature of LiteLLM (requires a PostgreSQL database) are available options.
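The deploy command repeats the project ID in four places, so a mismatch is easy to introduce when adapting it. As an optional convenience, the sketch below assembles the same argument list from a few parameters. It uses only the flags shown in the command above; the function name is hypothetical, and it prints the command rather than running it.

```python
import shlex


def cloud_run_deploy_args(project: str, region: str, service_account_id: str,
                          model: str, service: str = "litellm",
                          port: int = 4000) -> list[str]:
    """Assemble the gcloud run deploy arguments used in this article."""
    sa_email = f"{service_account_id}@{project}.iam.gserviceaccount.com"
    return [
        "gcloud", "run", "deploy", service,
        f"--project={project}",
        "--platform=managed",
        f"--service-account={sa_email}",
        f"--args=--model={model},--drop_params",
        f"--port={port}",
        f"--set-env-vars=VERTEXAI_PROJECT={project},VERTEXAI_LOCATION={region}",
        f"--image=gcr.io/{project}/litellm:main-latest",
        "--ingress=internal",  # keep the service reachable only from the VPC
        f"--region={region}",
        "--allow-unauthenticated",
    ]


args = cloud_run_deploy_args(
    project="project-id-123456",
    region="us-east5",
    service_account_id="vertexai",
    model="vertex_ai/claude-3-opus@20240229",
)
print(shlex.join(args))
```

The printed line can be pasted into Cloud Shell, or the list can be passed to subprocess.run if you prefer to script the deployment.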

After the Cloud Run service is up and running, modify the LibreChat config file librechat.yaml in your project directory by adding the following under the “endpoints” YAML key as a “custom” endpoint.

    endpoints:
      custom:
        - name: "Vertex AI"
          apiKey: "sk-1234"
          baseURL: "https://litellm-ihyggibjkq-uc.a.run.app"
          models:
            default: ["claude-3-opus"]
            fetch: false
          titleConvo: true
          titleModel: "claude-3-opus"
          summarize: false
          summaryModel: "claude-3-opus"
          forcePrompt: false
          modelDisplayLabel: "Vertex AI"

When you test the model by choosing “Vertex AI” from the dropdown, if you encounter the following error, ensure you have passed both the --ingress=internal and --allow-unauthenticated flags as part of the gcloud run deploy command.

    LibreChat | <h1>Error: Unauthorized</h1>
    LibreChat | <h2>Your client does not have permission to the requested URL <code>/chat/completions</code>.</h2>