If you have ever used ChatGPT Plus, OpenAI’s SaaS GenAI offering, you are likely familiar with the Browsing extension, which uses Bing search to retrieve current information from the Internet and inform the GPT model’s responses to the user’s prompts. One of the major weaknesses of a large language model without a RAG plugin that can retrieve real-time data is its “knowledge cutoff” date. Without access to external data sources, a model can only generate responses based on what it learned from its training data. Therefore, the LLM cannot respond accurately about events that occurred after its training cutoff. An LLM may even “hallucinate,” generating inaccurate information that is not rooted in any source. This is clearly undesirable, as it undermines the reliability of an AI application’s responses.

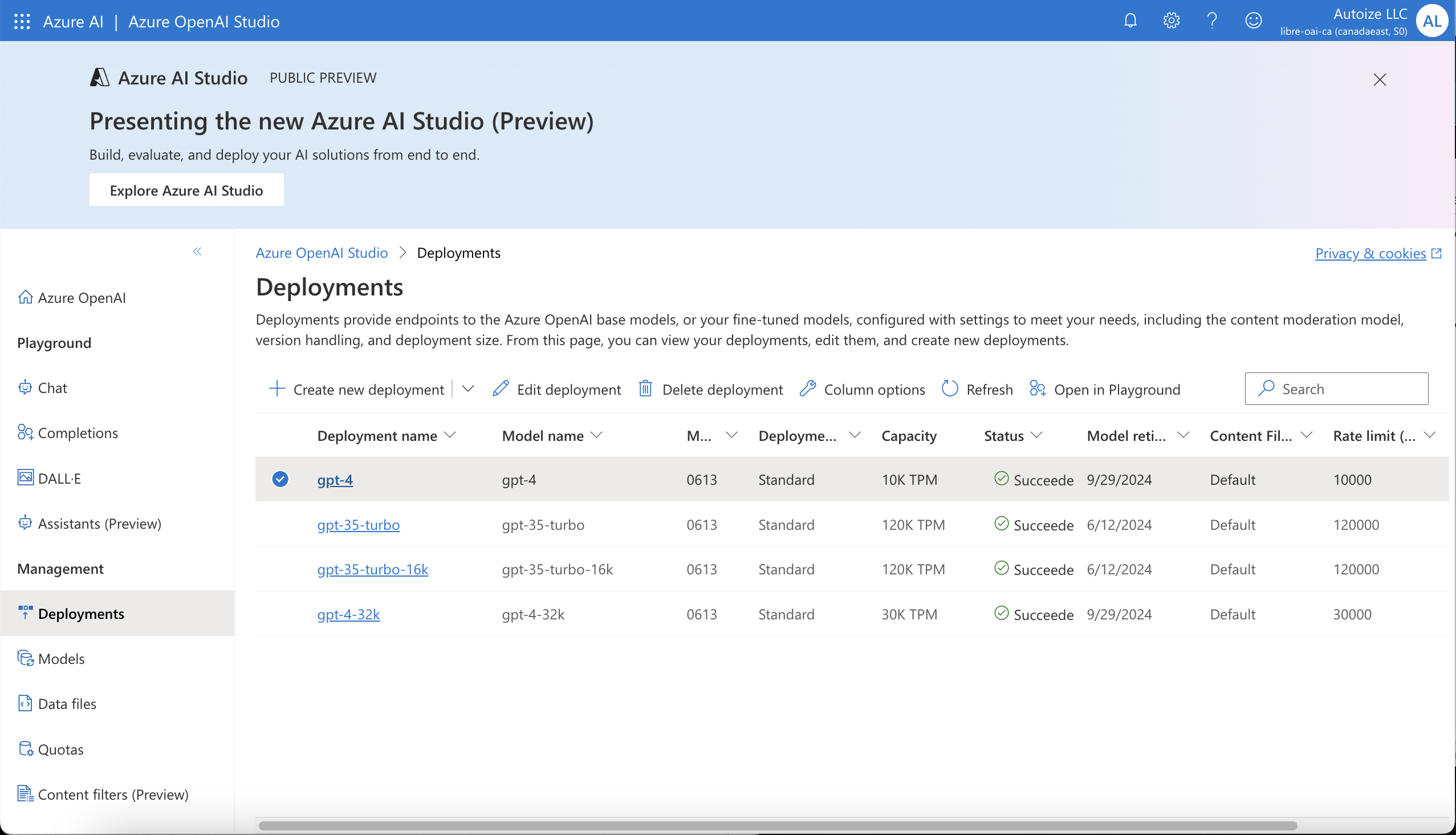

Fortunately, it is now possible to integrate retrieval capabilities similar to those of ChatGPT Plus into an AI application that you control on your own infrastructure, while meeting data protection and sovereignty requirements. Azure OpenAI Service essentially mirrors the public OpenAI API on openai.com: it serves the same OpenAI models, hosted in an Azure region of your choice and covered by Microsoft’s terms of service, which ensure that your prompts and data are never used by Microsoft or OpenAI to train their models. As an additional benefit, deploying an OpenAI model on Azure enables AI use cases that require storing and processing sensitive data in compliance with standards such as ISO 27001, HIPAA, and FedRAMP.
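To make the “mirror” point concrete, here is a minimal sketch that builds (but does not send) the same Chat Completions request for both endpoints, using only the Python standard library. The resource name, deployment name, and API key are placeholders, and `2023-05-15` is assumed as the Azure OpenAI `api-version`; check which versions your resource supports. The payload is the same; what changes is the host, the auth header, and the fact that Azure selects the model by the deployment name in the URL.

```python
import json
import urllib.request


def build_openai_request(api_key: str, model: str, messages: list) -> urllib.request.Request:
    """Chat Completions request against the public OpenAI endpoint (not sent)."""
    body = {"model": model, "messages": messages}
    return urllib.request.Request(
        "https://api.openai.com/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def build_azure_openai_request(resource: str, deployment: str, api_key: str,
                               messages: list, api_version: str = "2023-05-15") -> urllib.request.Request:
    """Same payload shape, routed to your own Azure OpenAI resource (not sent)."""
    url = (f"https://{resource}.openai.azure.com/openai/deployments/"
           f"{deployment}/chat/completions?api-version={api_version}")
    # No "model" field: Azure picks the model from the deployment name in the URL.
    body = {"messages": messages}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

Passing either request to `urllib.request.urlopen` would send it; keeping construction separate makes it easy to switch an application between the two providers.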

If the intended use case of your AI application is narrow enough that you can predict the scope of the questions users will ask, then fine-tuning an open source foundation model such as LLaMA 2 on the specialist knowledge the AI needs can be a great option. After fine-tuning an existing model on domain-specific knowledge, you can import the resulting “checkpoint” into an AI-as-a-Service platform, or deploy and serve it on your own GPUs using an inference server such as Hugging Face TGI or vLLM.

However, if the knowledge your AI needs changes frequently, Retrieval Augmented Generation (RAG) can be a more appropriate approach than retraining the model. As discussed in our article about RAG using custom embeddings stored in a vector database, you can chat with your internal documents, such as PDFs, text documents, or spreadsheets. This data can even be streamed into the embedding model through a data pipeline built with a tool such as Airbyte or n8n.
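The retrieval step of RAG can be illustrated with a toy, self-contained sketch. It assumes a bag-of-words term-count vector as a stand-in for a real embedding model and a plain Python list as a stand-in for the vector database; a production pipeline would use learned embeddings and an approximate nearest-neighbour index, but the flow (embed the query, rank stored chunks by cosine similarity, paste the top hits into the prompt) is the same.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k stored chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Ground the question in the retrieved chunks before sending it to the LLM."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"
```

Because new documents only need to be embedded and appended to the store, updating the knowledge base never touches the model weights, which is the advantage over retraining described above.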

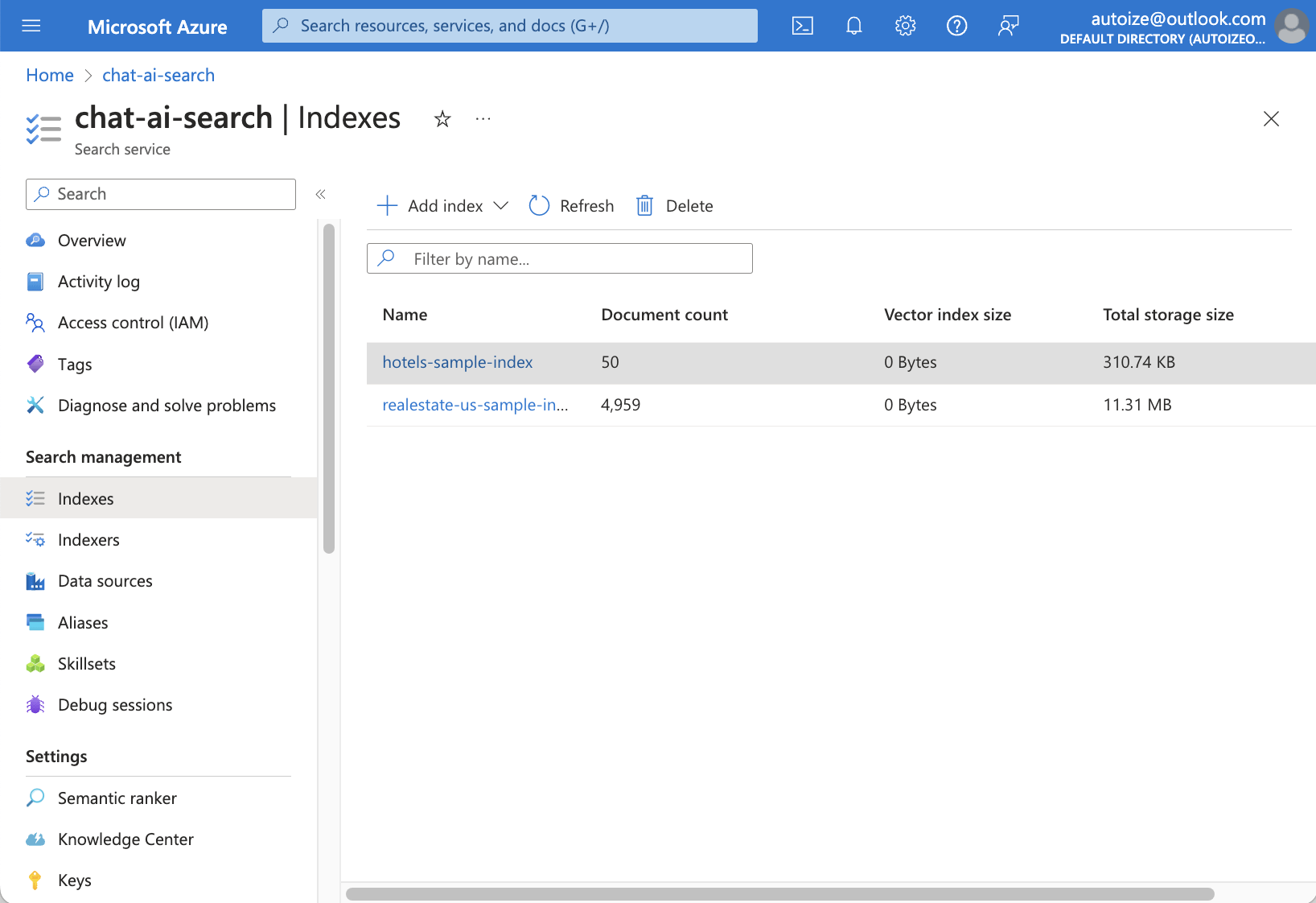

Azure AI Search (formerly Azure Cognitive Search) is a managed service for indexing and searching data from Azure SQL Database, Azure Cosmos DB, or other data sources such as content stored in Azure Blob Storage. Its indexes can be used for RAG through an Azure AI Search plugin in your AI application, so that the LLM can generate answers grounded in your custom data. Using Azure AI Search for indexing and search is an alternative to running documents through an embedding model and storing the embeddings in your own vector database, such as Elasticsearch + MongoDB or Postgres with pgvector.
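As a rough illustration of what such a plugin does, the sketch below builds a query against the Azure AI Search REST API (`POST /indexes/{index}/docs/search`) and flattens the documents in the response’s `value` array into plain text for the prompt. The service name, index name, key, and selected fields are placeholders, and `2023-11-01` is assumed as the REST `api-version`. This is not LibreChat’s plugin code, only a minimal stand-in.

```python
import json
import urllib.request


def build_search_request(service: str, index: str, api_key: str, query: str,
                         top: int = 5, api_version: str = "2023-11-01") -> urllib.request.Request:
    """Full-text query against an Azure AI Search index (built, not sent)."""
    url = (f"https://{service}.search.windows.net/indexes/{index}"
           f"/docs/search?api-version={api_version}")
    body = {"search": query, "top": top}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def results_to_context(response: dict, fields: list[str]) -> str:
    """Turn each matching document into one 'field: value' line for the LLM prompt."""
    lines = []
    for doc in response.get("value", []):
        lines.append("; ".join(f"{field}: {doc.get(field)}" for field in fields if field in doc))
    return "\n".join(lines)
```

Restricting the context to a few selected fields keeps the plugin output small, which matters once it is counted against the model’s context window.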

But what if the data your AI requires is constantly updated and best found through an Internet search? Instead of giving unhelpful responses that tell the user to perform a search themselves, an LLM can be integrated with a search plugin that retrieves a list of search results through an API such as the Google Custom Search API and then follows those links. By deploying a ChatGPT-style interface on your own infrastructure, you can combine search engine results with OpenAI or Azure OpenAI Service for inference. We deployed an instance of LibreChat, an open source ChatGPT-style interface, with the GPT-3.5 Turbo and GPT-4 models on Azure OpenAI Service.
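For a sense of what the search step involves, here is a minimal sketch against the Google Custom Search JSON API (`https://www.googleapis.com/customsearch/v1`). It builds the request URL from an API key, a Programmable Search Engine ID (`cx`), and the query, optionally narrowing results by recency with `dateRestrict`, and then formats the `items` (title, link, snippet) of a response into numbered context lines. The key and engine ID are placeholders, and this is an illustration rather than the LibreChat Google plugin itself.

```python
import urllib.parse
from typing import Optional

CSE_ENDPOINT = "https://www.googleapis.com/customsearch/v1"


def build_cse_url(api_key: str, cx: str, query: str, num: int = 5,
                  date_restrict: Optional[str] = None) -> str:
    """Build a Custom Search JSON API URL (the request itself is not sent)."""
    if not 1 <= num <= 10:
        raise ValueError("num must be between 1 and 10 for this API")
    params = {"key": api_key, "cx": cx, "q": query, "num": num}
    if date_restrict:
        params["dateRestrict"] = date_restrict  # e.g. "m6" = past six months
    return f"{CSE_ENDPOINT}?{urllib.parse.urlencode(params)}"


def items_to_context(response: dict) -> str:
    """Number each search hit so the model can cite and follow specific links."""
    return "\n".join(
        f"[{i}] {item.get('title', '')}\n{item.get('link', '')}\n{item.get('snippet', '')}"
        for i, item in enumerate(response.get("items", []), start=1)
    )
```

A browsing step can then fetch the most relevant `link` values for fuller page text when the snippets alone are not enough.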

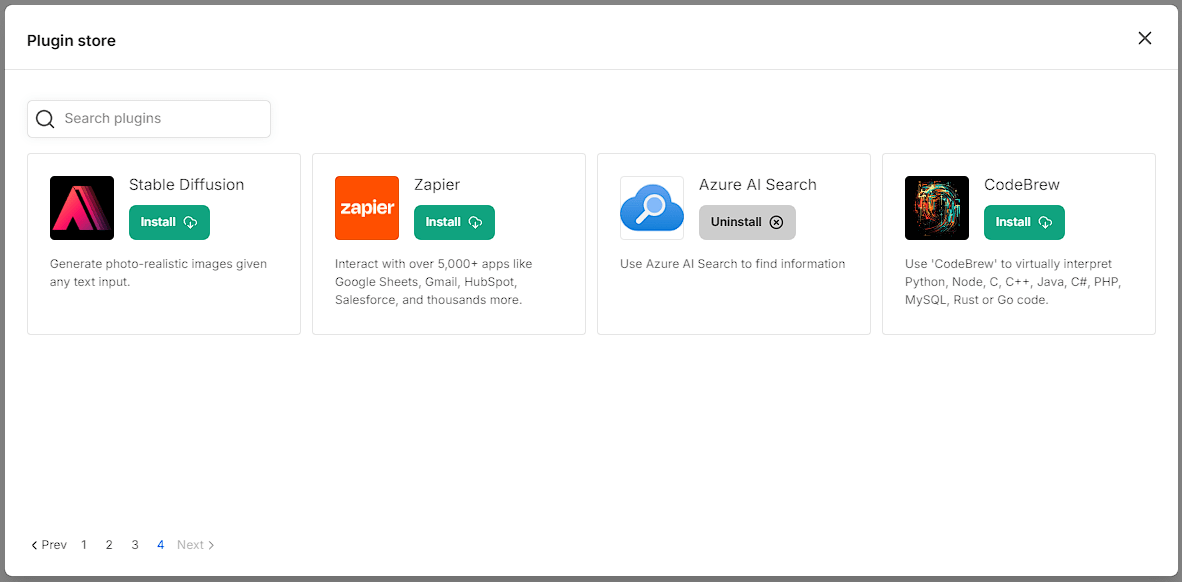

LibreChat offers a Plugin Store where users can select from plugins including Google Search, Azure AI Search, and Browsing to augment the capabilities of any OpenAI model. These plugins can be added from the store, and one or more can be enabled for any given prompt. Using LangChain, any prompt that requires an external data source is handed off to a plugin, which looks up information from Google or an Azure AI Search index, or scrapes data from a publicly accessible webpage. The JSON response from the plugin API becomes part of the input tokens sent to the OpenAI model, enriching the context the model draws on when generating its response.
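The hand-off described above can be sketched in a few lines. This is a deliberately simplified stand-in, not LangChain’s or LibreChat’s API: the plugin registry, the `fake_search` plugin, and its canned result are hypothetical, and the `{"role": "function", ...}` message shape assumes the OpenAI function-calling chat format (a real exchange would also include the assistant message that requested the call). The point it shows is that the plugin’s JSON output is serialized into the message list and therefore billed and counted as input tokens.

```python
import json
from typing import Callable

# Hypothetical plugin registry: plugin name -> callable returning JSON-serializable data.
PLUGINS: dict[str, Callable[[str], dict]] = {}


def plugin(name: str):
    """Decorator that registers a function under a plugin name."""
    def register(fn: Callable[[str], dict]) -> Callable[[str], dict]:
        PLUGINS[name] = fn
        return fn
    return register


@plugin("fake_search")
def fake_search(query: str) -> dict:
    # Hypothetical stand-in for a real search API; returns canned data so the sketch runs offline.
    return {"query": query, "items": [{"title": "Example result", "link": "https://example.com"}]}


def add_plugin_result(messages: list[dict], name: str, query: str) -> list[dict]:
    """Run a plugin and append its JSON output as a message; returns a new list."""
    result = PLUGINS[name](query)
    return messages + [{"role": "function", "name": name, "content": json.dumps(result)}]
```

The extended message list is what gets sent on the next model call, so every plugin response enlarges the prompt.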

Below, we consider a few examples of use cases in which plugins retrieve information to enrich the large language model’s context. It is best to use a model with as large a context window as possible, such as GPT-3.5-Turbo-16K, so that longer conversations are supported without running out of tokens. To answer some prompts, the model may need to retrieve information from multiple sources using one or more plugins, and every plugin response consumes part of the context window, so a large context size greatly improves the user experience. Note that using the GPT-4-32K model with the maximum number of input tokens results in API costs of around $2 per prompt, so for most applications, GPT-3.5-Turbo-16K is a significantly more cost-effective alternative that still provides a large context size.
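The cost and context-size trade-off comes down to simple arithmetic. The sketch below assumes a GPT-4-32K price of $0.06 per 1,000 prompt tokens (the list price at the time of writing; check current OpenAI or Azure pricing) and context windows of 32,768 tokens for GPT-4-32K and 16,384 for GPT-3.5-Turbo-16K. It also checks whether conversation history plus plugin output plus the tokens reserved for the reply fit in a given window; the token counts in the usage line are illustrative.

```python
def prompt_cost_usd(prompt_tokens: int, usd_per_1k_prompt_tokens: float) -> float:
    """Input-side cost of one request at a per-1K-token price."""
    return prompt_tokens / 1000 * usd_per_1k_prompt_tokens


def fits_context(history_tokens: int, plugin_tokens: int,
                 max_completion_tokens: int, context_window: int) -> bool:
    """Prompt (history + plugin output) plus the reserved reply must fit in the window."""
    return history_tokens + plugin_tokens + max_completion_tokens <= context_window


# Assumed GPT-4-32K list price: $0.06 per 1K prompt tokens.
print(round(prompt_cost_usd(32_768, 0.06), 2))  # 1.97
# Illustrative counts: 9K of history + 5K of plugin output + 1K reply fits in a 16K window.
print(fits_context(9_000, 5_000, 1_000, 16_384))  # True
```

A full 32K prompt therefore costs close to $2 before any output tokens are counted, which is why the 16K model is usually the better default.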

Are you ready to take the next step? Get in touch with our AI infrastructure consultants to discuss how to integrate your data with an AI application, like a ChatGPT-style chatbot for your organization. We can assist you in establishing ELT (Extract-Load-Transform) pipelines to confidentially make the data from your data warehouse/data lake accessible to state-of-the-art LLMs.

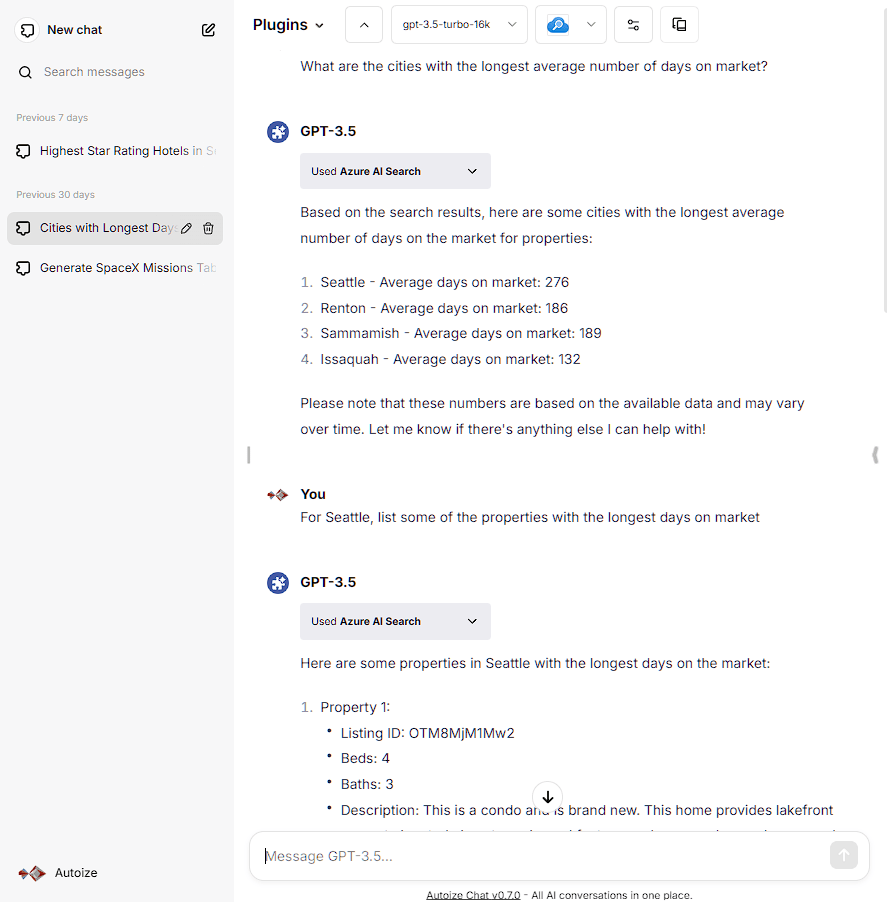

Azure AI Search LibreChat Plugin – “Real Estate US” Sample Index

The Azure AI Search plugin retrieves data from an Azure SQL database of real estate listings to respond to a natural language prompt about the US metropolitan areas with the longest average days on market, and a follow-up prompt to list such properties in Seattle.

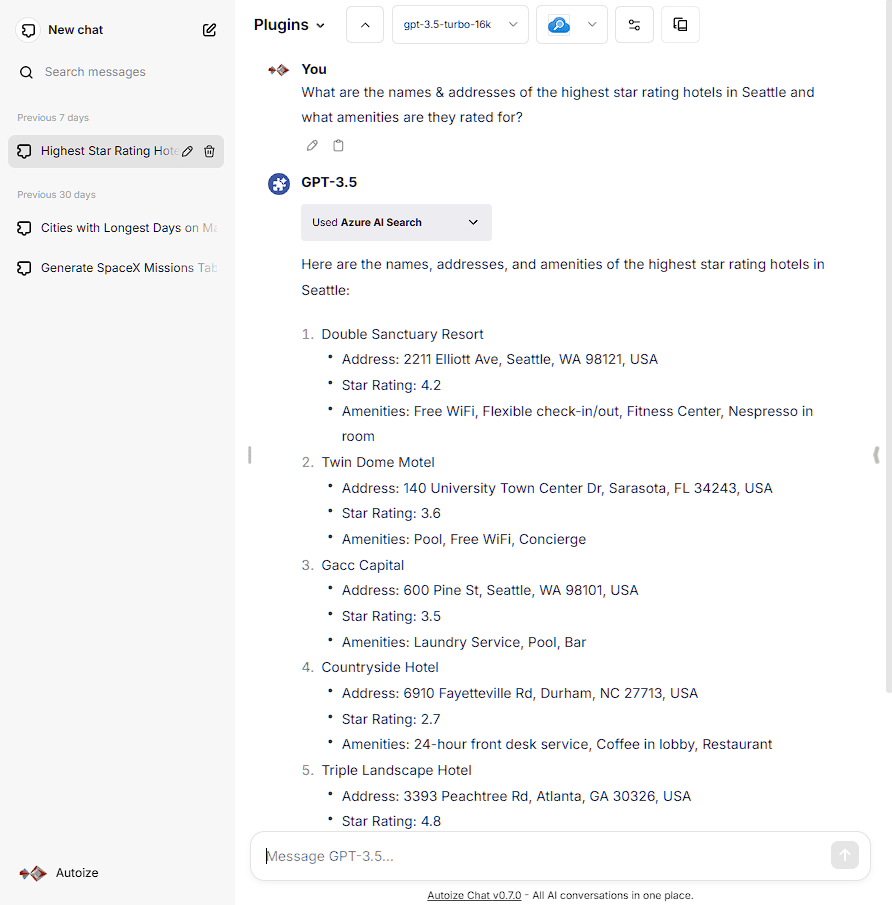

Azure AI Search LibreChat Plugin – “Hotels” Sample Index

The Azure AI Search plugin retrieves data from a Cosmos DB database of hotels and their attributes (star rating, amenities) to respond to a natural language prompt asking for the highest-rated hotels in Seattle.
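A prompt like “highest-rated hotels in Seattle” maps naturally onto a filtered, sorted index query. The sketch below builds such a request body for the Azure AI Search `docs/search` endpoint using the `search`, `filter`, `orderby`, `select`, and `top` parameters. The field names `Address/City`, `Rating`, and `HotelName` are assumed from Microsoft’s hotels sample index, and the plugin in the screenshot may phrase its query differently. The helper doubles single quotes, which is how OpenData (OData) string literals are escaped, so a city such as “Coeur d'Alene” cannot break the filter.

```python
def odata_string(value: str) -> str:
    """Quote a string literal for an OData filter; embedded single quotes are doubled."""
    return "'" + value.replace("'", "''") + "'"


def top_rated_hotels_query(city: str, top: int = 5) -> dict:
    """Request body for POST /indexes/{index}/docs/search (field names assumed from the hotels sample)."""
    return {
        "search": "*",                                   # match all documents, rely on filter + sort
        "filter": f"Address/City eq {odata_string(city)}",
        "orderby": "Rating desc",                        # highest star rating first
        "select": "HotelName,Rating,Address/City",       # keep the plugin output compact
        "top": top,
    }
```

Filtering and sorting inside the index means only the few matching hotels are returned to the model, rather than asking the LLM to rank a large result set itself.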

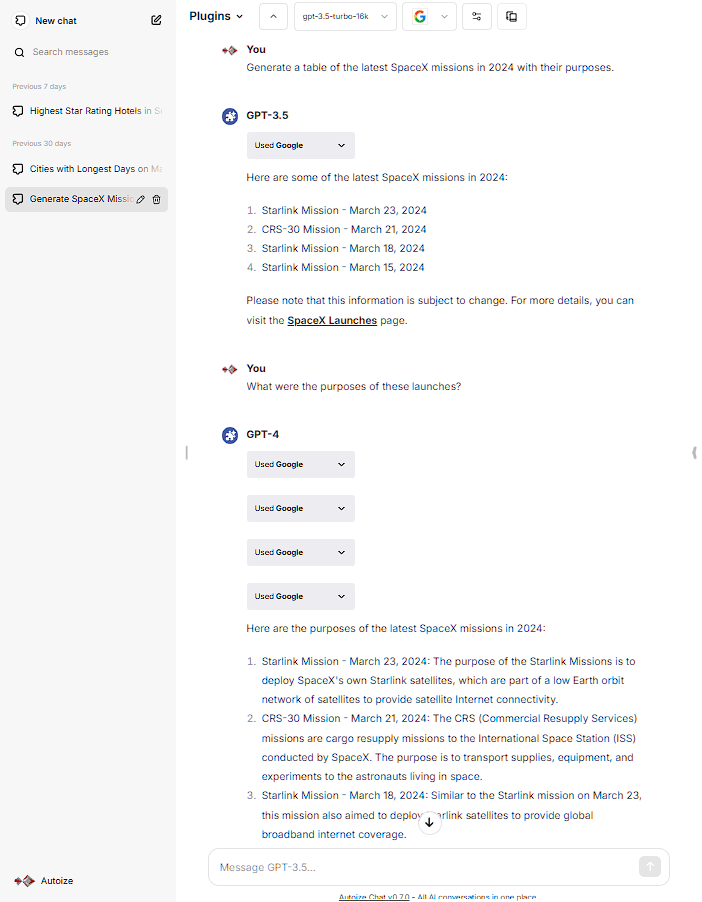

“Google” LibreChat Plugin – List SpaceX Missions in 2024

The Google plugin, using the Google Custom Search API, retrieves search results and uses them to list the SpaceX launches that took place in 2024, even though the model’s knowledge cutoff predates them. It also answers the user’s follow-up question about the purpose of those missions.