Portainer has long been a Docker Swarm native tool, but that is about to change. Starting with version 2.0, Portainer will support managing Kubernetes clusters from its graphical dashboard. Supported platforms include managed Kubernetes services from popular cloud providers, such as Amazon EKS and DigitalOcean Managed Kubernetes, as well as distributions such as minikube, Rancher k3s, and Docker Desktop.

Portainer’s support for a wide range of Kubernetes distributions will see it deployed in dev/test and production environments both on-prem and in the cloud. From the Portainer front-end for Kubernetes, users can create Resource Pools (namespaces), Configurations (env. variables and secrets), and Applications (deployments) with ease.

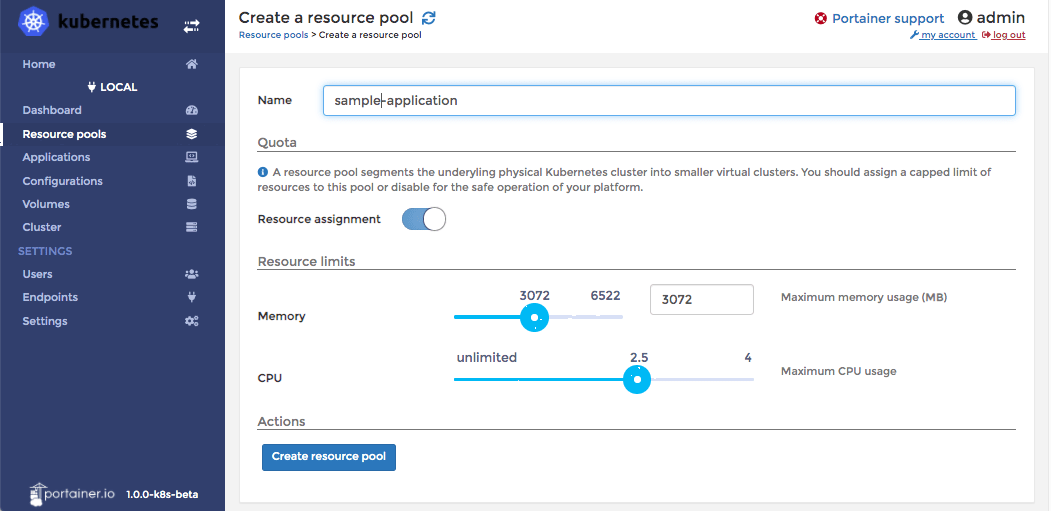

Portainer’s Resource Pools are used to segregate the hardware resources available on the cluster by setting memory and CPU quotas. Configurations are also assigned to a Resource Pool, so that sensitive data such as secrets can be easily organized as belonging to a particular project.
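As a rough illustration of what a Resource Pool corresponds to in plain Kubernetes terms, the sketch below writes a namespace with a CPU and memory quota to a manifest file. The name `demo-pool` and the quota values are hypothetical, and the objects Portainer actually creates may differ.

```shell
#!/bin/sh
# Sketch only: a namespace plus a ResourceQuota, which is roughly what
# a Resource Pool with CPU and memory limits maps to.
# "demo-pool" and the limits below are hypothetical values.
cat > resource-pool.yaml <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: demo-pool
---
apiVersion: v1
kind: ResourceQuota
metadata:
  name: demo-pool-quota
  namespace: demo-pool
spec:
  hard:
    limits.cpu: "2"
    limits.memory: 4Gi
EOF

# To apply it to a cluster (requires a configured kubectl):
#   kubectl apply -f resource-pool.yaml
```

Note that once a quota on `limits.cpu` or `limits.memory` is in place, Kubernetes rejects pods in that namespace that do not declare the corresponding limits.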

A user’s deployments can leverage Kubernetes primitives such as Persistent Volume Claims (persistent volumes) and Load Balancers (ingresses), either through an advanced deployment using a YAML file or through the graphical interface. Existing Docker Compose files can even be translated from Compose format to a service definition that Kubernetes can understand, without using a tool outside of Portainer at all.

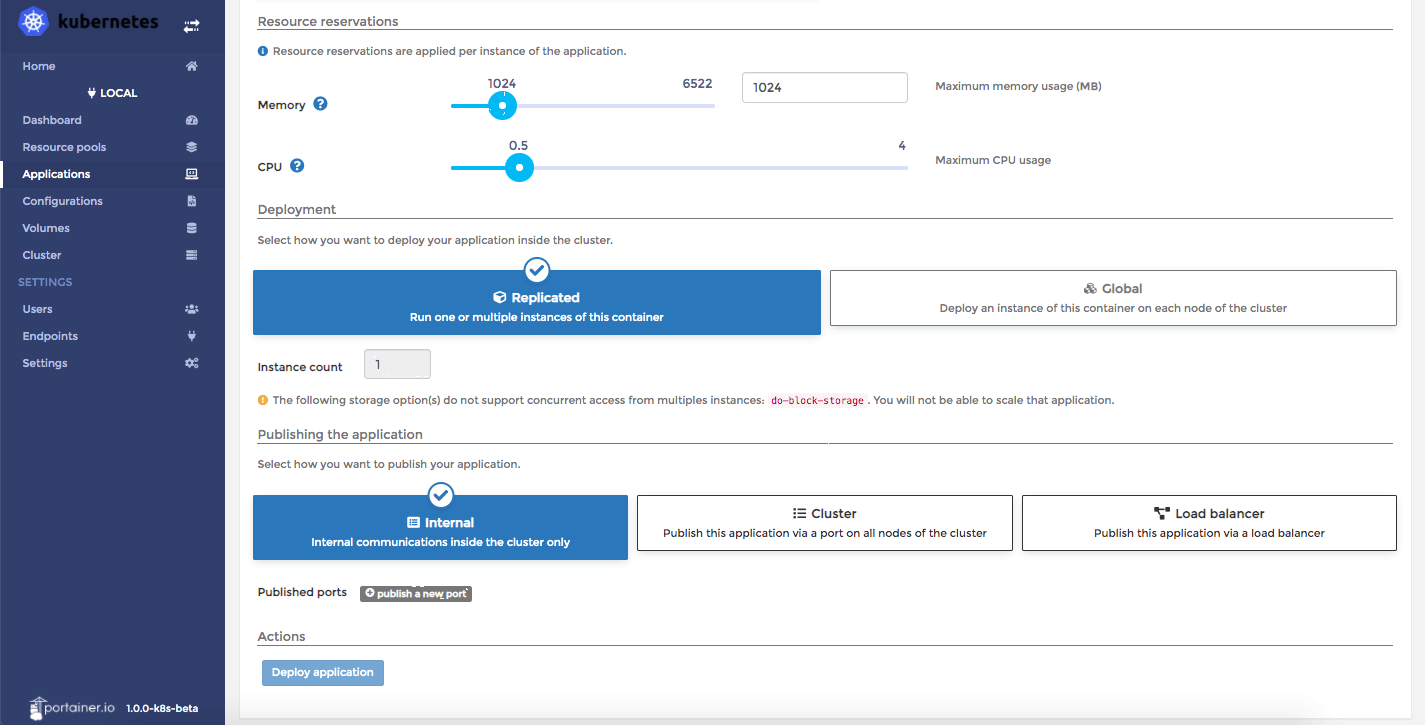

The more complicated Kubernetes concepts such as StatefulSets, Deployments, and DaemonSets have been abstracted away: Portainer simply asks the user a series of questions that will be familiar to Docker Swarm users. For example, the “Add Application” window asks the user whether they want to deploy a service as Replicated or Global. In Docker Swarm, a replicated service (e.g. an application) is deployed across any Docker hosts that meet the constraints, up to the number of replicas specified. A global service (e.g. a health check container) is started on every Docker node that is added to the Swarm.

The equivalent of a replicated service in Kubernetes is a Deployment, and the equivalent of a global service is a DaemonSet. Kubernetes also has the concept of a StatefulSet, which is a workload whose pods keep a stable identity and their own persistent storage, so that their “state” survives rescheduling.
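The mapping from Swarm-style deployment modes to Kubernetes workload kinds can be sketched with a small shell helper that prints a minimal manifest. The function names, the application names, and the `example/node-agent` image are hypothetical, and this is not how Portainer itself generates manifests.

```shell
#!/bin/sh
# Hypothetical helper: map a Swarm-style mode to a Kubernetes workload kind.
workload_kind() {
  case "$1" in
    replicated) echo "Deployment" ;;
    global)     echo "DaemonSet" ;;
    *)          echo "unknown mode: $1" >&2; return 1 ;;
  esac
}

# Hypothetical helper: print a minimal manifest for the given mode.
# Usage: render_workload MODE NAME IMAGE [REPLICAS]
render_workload() {
  mode="$1"; name="$2"; image="$3"; replicas="${4:-1}"
  kind="$(workload_kind "$mode")" || return 1
  cat <<EOF
apiVersion: apps/v1
kind: $kind
metadata:
  name: $name
spec:
EOF
  # Only a Deployment takes a replica count; a DaemonSet runs one pod per node.
  if [ "$kind" = "Deployment" ]; then
    echo "  replicas: $replicas"
  fi
  cat <<EOF
  selector:
    matchLabels:
      app: $name
  template:
    metadata:
      labels:
        app: $name
    spec:
      containers:
        - name: $name
          image: $image
EOF
}

render_workload replicated web nginx:1.25 3 > web-deployment.yaml
render_workload global node-agent example/node-agent:latest > agent-daemonset.yaml
```

The only structural difference between the two outputs is the `kind` and the `replicas` field, which mirrors how closely the Replicated and Global choices line up with Deployments and DaemonSets.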

An advantage that Kubernetes currently has over Docker Swarm is its ability to leverage block storage services on cloud providers through Container Storage Interface (CSI) drivers, whereas Docker Swarm currently relies on plugins such as REX-Ray to do the same. CSI support is coming to Docker Swarm as well, and it will be open source even though its lead developer, Drew Erny, has moved to Mirantis. We expect Docker Swarm and Kubernetes to be roughly at parity in this respect once cluster volume support for Swarm is complete.

Portainer for Kubernetes supports mapping volumes in a container to any block storage service currently supported by Kubernetes. When you initialize your Portainer instance on your Kubernetes cluster, you will be prompted to select the access mode for persistent storage for your provider – RWO, ROX, or RWX.

- RWO – Read Write Once – The persistent volume can only be read and written to by one node at a time. For block storage services such as Amazon EBS or DigitalOcean Block Storage, RWO is the only supported access mode.

- ROX – Read Only Many – The persistent volume can be mounted by multiple nodes at a time, but only for reading.

- RWX – Read Write Many – The persistent volume can be read and written to from multiple nodes at a time. Network filesystems such as Amazon EFS or Gluster can support RWX access mode.
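To show where these access modes end up, here is a minimal sketch that expands the abbreviations above into the values Kubernetes uses in a PersistentVolumeClaim and writes a claim to a file. The helper names, the claim name `app-data`, and the 5Gi size are hypothetical. With no storage class specified, the claim falls back to the cluster's default.

```shell
#!/bin/sh
# Hypothetical helper: expand an access mode abbreviation into the
# value used in a PersistentVolumeClaim spec.
access_mode() {
  case "$1" in
    RWO) echo "ReadWriteOnce" ;;
    ROX) echo "ReadOnlyMany" ;;
    RWX) echo "ReadWriteMany" ;;
    *)   echo "unknown access mode: $1" >&2; return 1 ;;
  esac
}

# Hypothetical helper: print a minimal claim.
# Usage: render_pvc NAME MODE SIZE
render_pvc() {
  pvc_name="$1"; pvc_size="$3"
  pvc_mode="$(access_mode "$2")" || return 1
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: $pvc_name
spec:
  accessModes:
    - $pvc_mode
  resources:
    requests:
      storage: $pvc_size
EOF
}

render_pvc app-data RWO 5Gi > app-data-pvc.yaml

# To create the claim (requires a configured kubectl):
#   kubectl apply -f app-data-pvc.yaml
```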

A broad range of cloud and on-prem storage providers have written CSI drivers for Kubernetes, including AWS, Azure, DigitalOcean, Google Cloud, Hetzner and others. If you are using an on-prem storage technology such as Ceph, Cinder, Dell EMC, Gluster, Rancher Longhorn, NetApp, Nutanix, OpenEBS, Portworx, StorageOS, vSphere, or Zadara Storage, Kubernetes has also got you covered.

When you deploy applications using Portainer for Kubernetes, you can leverage the LoadBalancer object to use external load balancers such as Amazon ALB or DigitalOcean Load Balancers to route traffic into the containers running on your cluster.

The simplest way to deploy Portainer for Kubernetes automatically provisions an external load balancer through your cloud provider’s API, so that the Portainer dashboard can be reached over the Internet. A bare metal equivalent is also available through an open source project known as MetalLB. Neil Cresswell, the co-founder of Portainer, has discussed how MetalLB can be used as a load balancer with a local k3s cluster.

If you would rather use a self-managed load balancer or reverse proxy hosted outside of the Kubernetes cluster, you can deploy Portainer with a NodePort instead, which exposes Portainer on ports 30777 and 30776 across all of your Kubernetes nodes.
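For reference, a NodePort service along these lines might look like the sketch below. This is an illustration, not the manifest Portainer ships. The namespace, the selector label, and the pairing of 30777 with the dashboard on port 9000 and 30776 with port 8000 are assumptions.

```shell
#!/bin/sh
# Sketch only: a NodePort Service exposing two ports on every node.
# The namespace, selector label, and the internal ports paired with
# each nodePort are assumptions, not Portainer's published manifest.
cat > portainer-nodeport.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: portainer
  namespace: portainer
spec:
  type: NodePort
  selector:
    app: portainer
  ports:
    - name: http
      port: 9000
      targetPort: 9000
      nodePort: 30777
    - name: edge
      port: 8000
      targetPort: 8000
      nodePort: 30776
EOF

# An external reverse proxy would then forward traffic to
# <any-node-ip>:30777 to reach the dashboard.
```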

Do we think all Docker Swarm users need to switch to Kubernetes? No, although Portainer for Kubernetes will certainly make the transition easier for Docker fans accustomed to the ease of use of Docker’s developer-focused tooling.

For many teams who adopt Kubernetes, it will most likely be with a managed Kubernetes service such as AKS, GKE, DO Managed Kubernetes, or Linode LKE. Updating Kubernetes versions has always been particularly challenging, not to mention maintaining components such as etcd – a replicated key-value store that maintains state for the Kubernetes master node. With most managed Kubernetes services, the master node is fully managed and you only pay for the worker nodes (minions) – a notable exception is GKE, where master nodes will be billed at $0.10/hour starting in June 2020.

For development and small-scale production purposes, setup scripts like Rancher k3s and arkade by Alex Ellis can be used to spin up a k3s cluster complete with Portainer for Kubernetes with just a few commands.

Currently, Portainer for Kubernetes is in beta. It will debut with Portainer 2.0 and be open sourced at that time. It is already proving to be a very capable Kubernetes management tool, which we tested with DO Managed Kubernetes.

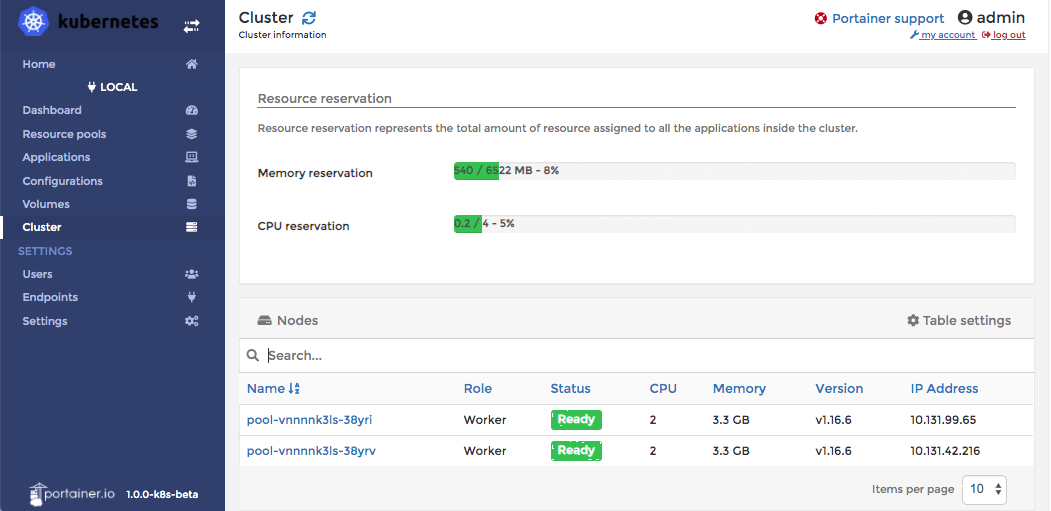

We created a new project in DigitalOcean and spun up a small Kubernetes cluster with 2 nodes (4GB / 2 CPU each). Then, we configured doctl, DO’s command line utility, with an API key with read/write permissions to our account.

It is better to use DO’s automated certificate generation tool through doctl, which will automatically refresh the certificates that kubectl, the Kubernetes client, needs to communicate with your remote cluster. If you choose to download the certificates manually, they will expire after 7 days.

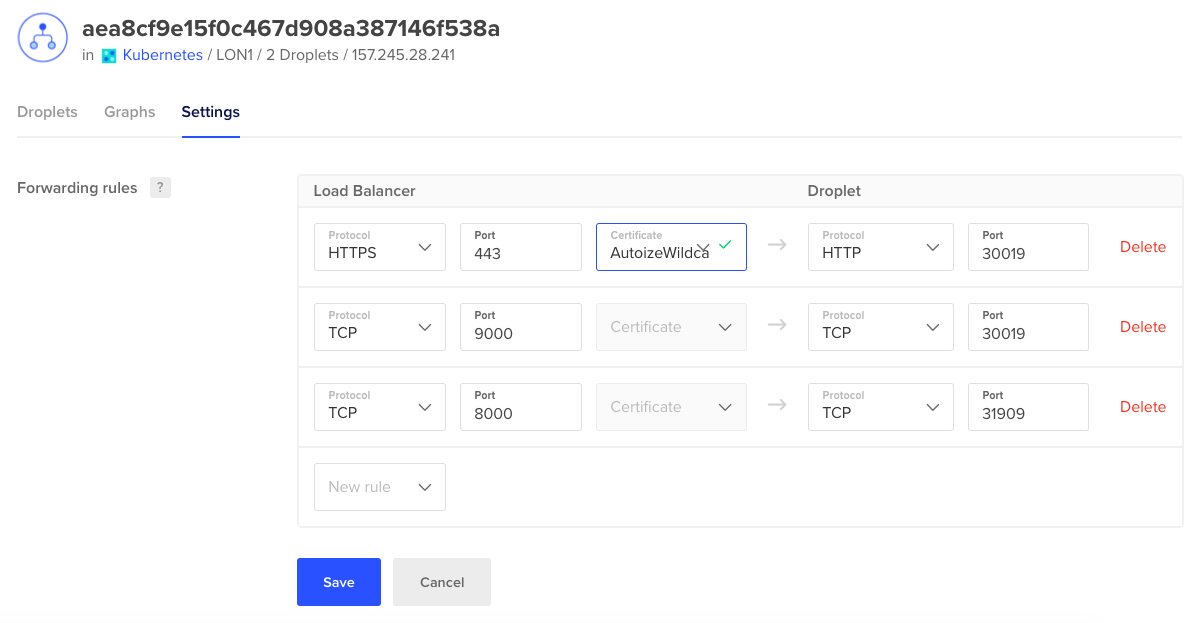

Finally, from our laptop, we deployed the Portainer YAML file to our cluster, and Portainer came to life on port 9000 of our DO Load Balancer’s IP.

    curl -LO https://raw.githubusercontent.com/portainer/portainer-k8s/master/portainer.yaml
    kubectl apply -f portainer.yaml

We pointed a hostname via DNS at the load balancer, and configured SSL termination using an existing SSL certificate. If you have your domain’s DNS zone delegated to DO’s nameservers, you could also theoretically use the automated Let’s Encrypt certificate installer.

Try Portainer for Kubernetes yourself today, join the community forum or Slack, or get in touch with one of our cloud consultants about deploying a container cluster on the cloud provider of your choice.