Did you know that your server could unwittingly become part of a “botnet” attacking other servers in a distributed denial of service (DDoS) attack? The increasing adoption of cloud computing services by business users who are not necessarily technical has led to gaping deficiencies in security awareness. In the traditional, on-premises world, most resources sat safely guarded behind the perimeter of the network. Internal users needed to authenticate over a VPN to access any services on the LAN. Only a select number of Internet-facing services were placed in a DMZ (demilitarized zone), partitioned off from the rest of the network. This network architecture gave sysadmins an overarching view of the traffic passing in and out through their NAT gateway and any VPN appliances.

With the advent of the cloud, and a mobile workforce that demands convenient access to work from anywhere, an increasing number of services are being deployed outside of the protective umbrella of the traditional corporate network. More and more servers are being exposed directly to the Internet, especially with VPS hosting providers that assign a public IP address to every instance by default. While this is convenient for dev/test workloads and beginner cloud users, many users do not adequately secure the services running on their VPS against access from the Internet.

Memcached Reflection Attack – Real World Scenario

Recently, a custom kernel provided by a popular cloud provider in the U.S. caused the firewalld service to go down for Fedora and CentOS servers. This inadvertently exposed unauthenticated services such as Memcached, which do not ask for a password by default – and are normally only accessed locally. Any users who were relying solely on firewalld to block access to Memcached were affected.

According to the US Department of Homeland Security and German Federal Office for Information Security, an open Memcached server can be “poisoned” with spoofed UDP requests that are reflected back at the victim of the DDoS attack. The cumulative traffic can reach gigabits per second (Gbps) or even terabits per second (Tbps), bringing down the attacked service, especially if the victim is not using a DDoS protection service.

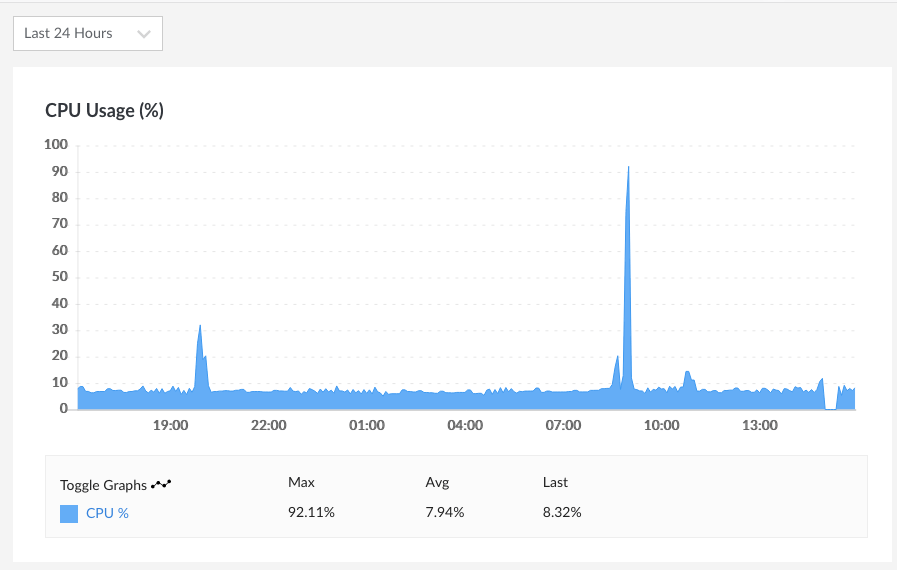

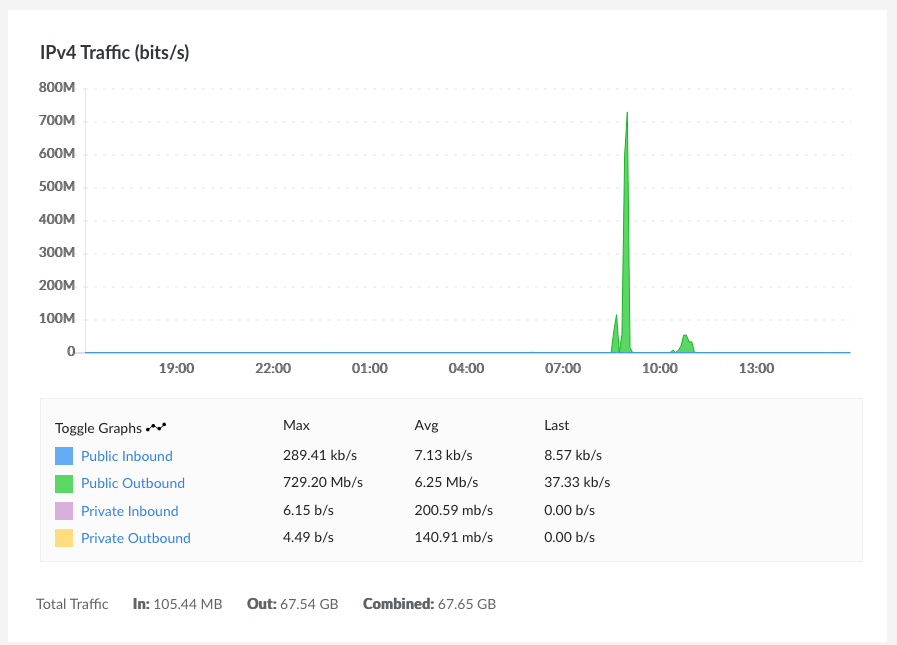

A web server that typically transfers less than 10 GB of traffic a month was gobbling up 50–100 GB of traffic within a matter of hours. These spikes also pushed the CPU usage above 90%, reaching a peak of 92.11% for the server. The peak amount of data transferred was 729.20 Mb/s. The attackers were relentless, using the server for attacks multiple times daily (not pictured).

The particular cloud provider in question does not block the default Memcached port 11211 (UDP) as competitors such as DigitalOcean have done, so large volumes of traffic were exiting their network, and potentially being used for a DDoS reflection attack.
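
To check whether one of your own servers answers Memcached requests over UDP, a probe along the following lines can be run from a machine outside the server's network. This is a minimal sketch, not a full scanner: it assumes Memcached's default port 11211 and the 8-byte frame header that Memcached's UDP protocol places in front of each datagram. The function names are illustrative.

```python
import socket
import struct

MEMCACHED_PORT = 11211  # Memcached's default port (TCP and UDP)


def build_udp_stats_probe(request_id: int = 1) -> bytes:
    """Build a Memcached 'stats' request framed for UDP.

    The UDP frame header is four big-endian 16-bit fields:
    request ID, sequence number, total datagrams, reserved.
    """
    header = struct.pack("!HHHH", request_id, 0, 1, 0)
    return header + b"stats\r\n"


def answers_on_udp(host: str, port: int = MEMCACHED_PORT, timeout: float = 2.0) -> bool:
    """Return True if the host sends any payload back to a UDP stats probe."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        try:
            sock.sendto(build_udp_stats_probe(), (host, port))
            data, _ = sock.recvfrom(4096)
        except OSError:
            # Timeout, ICMP port unreachable, or no route: no reply was seen.
            return False
    # A reply longer than the 8-byte frame header carries stats output.
    return len(data) > 8
```

Only probe servers you administer. A `False` result means no reply reached the probing machine; it does not prove the port is closed to every other source address.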

Not only can a compromised Memcached server potentially cause costly downtime of critical services, it can result in unauthorized access to session data stored in Memcached, and it also negatively affects other networks on the Internet – particularly that of the DDoS attack victim.

A telltale sign is a sudden high volume of outbound network traffic, especially UDP traffic. If you suspect your server has been compromised to perform a DDoS reflection attack, contact our incident response team for assistance with (a) isolating the source of the attack and (b) closing the vulnerability to prevent further attacks. Finding which service is being used for a DDoS reflection attack is not always easy, especially since UDP traffic is not recorded in a web server’s logs.
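
One way to watch for such a spike on Linux is to sample the kernel's UDP counters. The sketch below assumes the usual `/proc/net/snmp` layout, in which a `Udp:` header line naming the counters is followed by a `Udp:` line with their values. It computes the outbound datagram rate between two snapshots. The function names and the sample figures are illustrative, and what counts as "too high" depends on the server's normal workload.

```python
import time


def udp_out_datagrams(snmp_text: str) -> int:
    """Extract the Udp OutDatagrams counter from /proc/net/snmp content."""
    udp_lines = [
        line.split() for line in snmp_text.splitlines() if line.startswith("Udp:")
    ]
    if len(udp_lines) < 2:
        raise ValueError("no Udp section found")
    names, values = udp_lines[0], udp_lines[1]
    return int(values[names.index("OutDatagrams")])


def udp_send_rate(before: str, after: str, interval_seconds: float) -> float:
    """Outbound UDP datagrams per second between two snapshots."""
    sent = udp_out_datagrams(after) - udp_out_datagrams(before)
    return sent / interval_seconds


def sample_live_rate(interval_seconds: float = 10.0) -> float:
    """Take two snapshots of /proc/net/snmp on the local Linux host."""
    with open("/proc/net/snmp") as f:
        before = f.read()
    time.sleep(interval_seconds)
    with open("/proc/net/snmp") as f:
        after = f.read()
    return udp_send_rate(before, after, interval_seconds)


# Made-up snapshots taken 60 seconds apart, for illustration only.
BEFORE = """Udp: InDatagrams NoPorts InErrors OutDatagrams RcvbufErrors SndbufErrors
Udp: 1200 3 0 900 0 0
"""
AFTER = """Udp: InDatagrams NoPorts InErrors OutDatagrams RcvbufErrors SndbufErrors
Udp: 1300 3 0 60900 0 0
"""
print(udp_send_rate(BEFORE, AFTER, 60.0))  # 1000.0
```

A web server that normally sends few UDP datagrams but suddenly shows a sustained high rate is worth investigating with a packet capture to identify the responsible port.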

Other common services that can be compromised to perform a UDP-based DDoS reflection attack include improperly configured DNS, NTP, SNMPv2, RPC, NetBIOS, LDAP, and TFTP servers.

If you are on the receiving end of a DDoS attack, we can also assist you in implementing DDoS protection and/or a web application firewall to mitigate the attack, and reconfiguring your server so that it only accepts authenticated connections from the protection provider’s IP ranges.

Reconfiguring your network to take advantage of third-party DDoS protection can be complex, and any DNS changes must be carefully executed so that they cause minimal or no downtime to critical services such as corporate websites, web applications, and email.

Are your cloud servers potentially vulnerable?

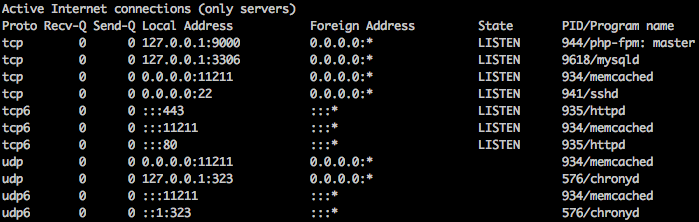

Using the sudo netstat -plunt command on Linux, you can see a list of services on your system, the addresses, and the ports that they are listening on. If there are any services that you don’t recognize, particularly services listening with a local address of 0.0.0.0 (all adapters) instead of 127.0.0.1 (localhost), contact one of our security professionals who can help you harden your system.
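
When the list is long, the check can be scripted. The following sketch parses output in the column layout that `netstat -plunt` typically prints and reports listeners bound to all adapters (`0.0.0.0` for IPv4, `::` for IPv6). The sample output is made up for illustration, and the function name is hypothetical.

```python
WILDCARD_ADDRESSES = {"0.0.0.0", "::"}


def exposed_listeners(netstat_output: str) -> list:
    """Return (protocol, port, program) for sockets bound to all adapters."""
    exposed = []
    for line in netstat_output.splitlines():
        fields = line.split()
        # Skip the banner and column-header lines.
        if len(fields) < 5 or not fields[0].startswith(("tcp", "udp")):
            continue
        proto, local = fields[0], fields[3]
        address, _, port = local.rpartition(":")
        if address in WILDCARD_ADDRESSES:
            # UDP rows have no State column, so drop "LISTEN" if present.
            rest = [f for f in fields[5:] if f != "LISTEN"]
            exposed.append((proto, int(port), " ".join(rest) or "-"))
    return exposed


# Illustrative sample only; real output will differ per system.
SAMPLE = """Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address           Foreign Address         State       PID/Program name
tcp        0      0 127.0.0.1:3306          0.0.0.0:*               LISTEN      1234/mysqld
tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN      801/sshd
udp        0      0 0.0.0.0:11211           0.0.0.0:*                           950/memcached
tcp6       0      0 :::80                   :::*                    LISTEN      1100/nginx
"""

for proto, port, program in exposed_listeners(SAMPLE):
    print(proto, port, program)
```

In this sample the MySQL listener on `127.0.0.1` is not reported, while SSH, Memcached, and the web server are. Whether each reported listener should be reachable from outside is a judgment call for the administrator.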

Enterprise Cloud vs Independent Cloud Provider Security

Enterprise Clouds – AWS, Azure, Oracle Cloud

For an instance to have two-way (ingress and egress) Internet connectivity on an enterprise-oriented cloud such as AWS, Azure, or Oracle Cloud, these requirements must be fulfilled in general:

- Ports need to be explicitly opened to the Internet by specifying security group (AWS, Azure) or security list (Oracle Cloud) rules.

- An Internet Gateway must be attached to the VPC (AWS), Virtual Network (Azure), or VCN (Oracle Cloud).

- The compute instance must be assigned a publicly routable IP address, and reside in a public subnet.

- A route table needs to be in place to route all traffic destined for the Internet to the Internet Gateway (IGW).

If an instance only requires egress Internet connectivity, for example to download updates & patches, a NAT gateway can be used in place of an Internet Gateway, and the instance can reside in a private (as opposed to a public) subnet. The instance also doesn’t require, and in fact, can’t be assigned a public IP address.
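
The requirements above amount to a conjunction: ingress works only when every condition holds at once. The sketch below models this in a simplified, provider-neutral way. It is not any cloud provider's API. The class, field names, and route labels are hypothetical, and a "public subnet" is represented simply as a default route pointing at the Internet Gateway.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceNetwork:
    """Simplified view of one instance's network configuration."""
    has_public_ip: bool
    internet_gateway_attached: bool
    default_route: str  # hypothetical labels: "igw", "nat", or "none"
    open_ingress_ports: frozenset = frozenset()


def reachable_from_internet(net: InstanceNetwork, port: int) -> bool:
    """Ingress requires all of the listed conditions together."""
    return (
        port in net.open_ingress_ports
        and net.internet_gateway_attached
        and net.has_public_ip
        and net.default_route == "igw"
    )


def has_egress(net: InstanceNetwork) -> bool:
    """Egress works through a NAT gateway or through the IGW with a public IP."""
    if net.default_route == "nat":
        return True
    return (
        net.default_route == "igw"
        and net.internet_gateway_attached
        and net.has_public_ip
    )


web = InstanceNetwork(True, True, "igw", frozenset({22, 80, 443}))
db = InstanceNetwork(False, True, "nat")

print(reachable_from_internet(web, 443))  # True
print(reachable_from_internet(db, 3306))  # False
print(has_egress(db))                     # True
```

Removing any single condition from the web server, such as the security rule for a port, is enough to make that port unreachable.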

Because the enterprise-oriented clouds are aimed at professional system architects and administrators, most of their users consider it a strength that you have to “jump through a number of hoops” to expose an instance to the Internet. In a larger organization where you have many engineers and sysadmins working together, the numerous safeguards help prevent inadvertently making a private resource accessible by the public.

Independent Cloud Providers – DigitalOcean, Linode, other VPS hosts

Not so with other clouds that pride themselves on their simplicity and ease-of-use. With smaller players like DigitalOcean or Linode, it’s assumed that you want every port to be open to the Internet – unless you specify otherwise. Most web servers and API endpoints only require SSH, HTTP, and HTTPS access at the most. For internal servers such as DB servers, it’s preferable to access them exclusively over the internal network. Leaving additional ports open is unnecessary, and potentially exposes your servers to attack, or to being co-opted in an attack against other networks.

Security Tips for Cloud Servers & Architecting Cloud Networks

Here are some security recommendations from our cloud experts for VPS users.

- Plan your cloud architecture according to the principle of least privilege. For example, DB servers should only be accessible to application servers that need to read/write from the database.

- Consider using a load balancer, such as HAProxy or a managed load balancer, to limit incoming traffic to your Internet-facing servers. The load balancer only proxies traffic on the configured ports through to your web servers and applications, acting as an additional line of defense. The backend servers should be configured to accept traffic only from the load balancer.

- Disable IPv6 connectivity to your instance if you are exclusively using IPv4. Otherwise, ensure an IPv6 firewall is configured. With firewalld and ufw, both IPv4 and IPv6 rules can be configured using the same utility. If using iptables, ensure you have configured ip6tables rules as well.

- Bind services such as database or caching servers only to localhost, or the local network adapter (e.g. eth1) – if internal network access is required. Avoid binding services to all adapters, which includes the public network adapter (e.g. eth0).

- Configure user authentication at the application level based on least privilege. For example, set a Redis password instead of going with the default – no authentication. For MySQL and MariaDB, make sure you run the mysql_secure_installation script to set a secure root password and disallow remote root logins. Database-driven applications should each have an individual database user, with granted privileges appropriate to the application.

- Even if every instance is assigned a public IP address, it doesn’t mean you have to use it. Leverage the internal networking service so as much traffic as possible remains physically in the provider datacenter (and logically confined to your account).

- Where available, use cloud firewalls to control traffic to instances and instance groups (based on tags) in conjunction with software-based firewalls. Cloud firewalls are similar to security groups in that they do not rely on a locally running firewall service, such as iptables.

- Consider using a bastion host to access instances over SSH, and use SSH public keys (instead of passwords) to authenticate to the bastion host.

- Keep all Linux packages up to date by regularly running the update command in your package manager (e.g. apt, yum, or dnf) and consider setting up cron-apt or yum-cron for automatic security updates.
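
To illustrate the tip about binding services to localhost, here is a minimal sketch of what a bind address means at the socket level. The function name is illustrative. A listener bound to `127.0.0.1` accepts connections only from the same machine, whereas one bound to `0.0.0.0` accepts them on every adapter, including the public one.

```python
import socket


def open_listener(bind_address: str = "127.0.0.1", port: int = 0) -> socket.socket:
    """Open a TCP listener on one specific address.

    Port 0 asks the operating system to pick a free port.
    """
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    server.bind((bind_address, port))
    server.listen()
    return server


with open_listener() as server:
    address, port = server.getsockname()
    # Only processes on this host can connect to this socket.
    print(f"listening on {address}:{port}")
```

Real services expose the same choice through their configuration rather than code, for example a bind or listen-address setting. Check each service's documentation for the exact option name.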