Control over one’s data and potential cost savings are the most popular reasons to build a “private storage cloud” using a NAS or home server, instead of relying on services like Dropbox or Google Drive. However, a single server in your basement does not a “cloud” make. Commercial cloud storage services employ multiple layers of redundancy that make it virtually impossible to lose your data from a single hard drive failure. These measures typically include RAID-backed storage volumes, redundancy of physical hosts across multiple datacenters, and off-site backups. With the right architecture, you can also achieve a similar degree of protection for your self-hosted file sync & share solution – especially if you use hybrid/public cloud as part of your deployment strategy.

Best RAID Levels for Cloud Storage Deployments in Various Environments

The simplest way to protect your self-hosted cloud storage from hard drive failure is to ensure the volume where you store your user data & database resides on a RAID 1, 5 or 10 array, instead of a single hard drive. Depending on where you host your own instance of NextCloud or Seafile, or what type of NAS device you use, you may be more (or less) exposed to this risk than you realize.

VPS or Cloud Server

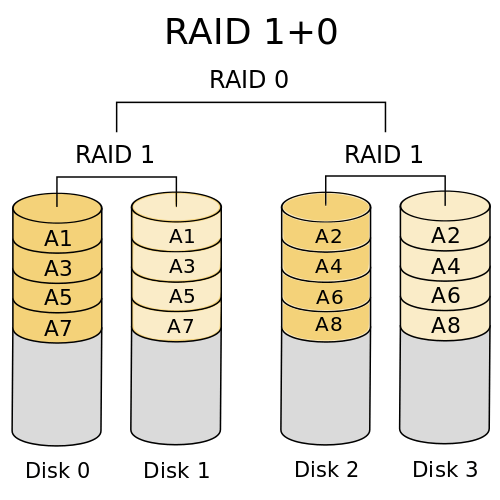

The industry standard for VPS or cloud servers is RAID 10 (striped mirror) block storage, which combines RAID 1 (mirroring) with RAID 0 (striping). This RAID level requires a minimum of 4 physical disks, but real-world servers usually implement it with many more.
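
To illustrate what "striped mirror" means, here is a minimal Python sketch that maps a logical block number to the disks that store it. It assumes a simplified layout in which disks 0 and 1, 2 and 3, and so on form mirrored pairs, and consecutive blocks rotate across those pairs. The function name `raid10_locate` is hypothetical, and real RAID controllers (or Linux software RAID) may arrange blocks differently.

```python
def raid10_locate(block: int, num_disks: int) -> tuple[int, int, int]:
    """Return (primary_disk, mirror_disk, offset) for a logical block.

    Hypothetical, simplified layout: disks (0, 1), (2, 3), ... are mirrored
    pairs, and consecutive logical blocks are striped across those pairs.
    """
    if num_disks < 4 or num_disks % 2 != 0:
        raise ValueError("RAID 10 needs an even number of disks, at least 4")
    pairs = num_disks // 2
    pair = block % pairs        # striping (RAID 0): rotate blocks across the pairs
    offset = block // pairs     # where the block sits on each disk of its pair
    return 2 * pair, 2 * pair + 1, offset   # mirroring (RAID 1): both disks hold it


# Blocks 0-5 on a hypothetical 4-disk array
for b in range(6):
    print(b, raid10_locate(b, 4))
```

Every block lives on two disks (the mirror), and neighboring blocks land on different pairs (the stripe), which is why reads and writes can be spread across the whole array.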

Every write is mirrored across two disks, which together form what’s known as a “subset” (a mirrored pair), and the pairs are then striped together. As long as both disks in the same subset don’t fail at the same time, the array can tolerate multiple drive failures without losing data. Most industrial-scale hosting operations prefer RAID 10 over RAID 5 because:

- Write performance is up to 65% higher with no parity information to calculate

- Risk of data loss grows far more slowly with the number of disks than in RAID 5, since only the failure of both disks in the same subset is fatal

- Recovering from a failure is fast, as the data is copied block-for-block from the mirror, similar to RAID 1
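
The subset rule can be checked by brute force. The Python sketch below assumes the same simplified pairing (disks 0 and 1, 2 and 3, and so on; the helper names are hypothetical) and counts what fraction of all possible two-disk failures would actually destroy data.

```python
from itertools import combinations


def raid10_survives(failed: set[int], num_disks: int) -> bool:
    """True if no mirrored pair has lost both disks (pairs assumed to be (0,1), (2,3), ...)."""
    return not any({2 * p, 2 * p + 1} <= failed for p in range(num_disks // 2))


def fatal_fraction(num_disks: int, failures: int = 2) -> float:
    """Fraction of all possible `failures`-disk combinations that destroy data."""
    combos = list(combinations(range(num_disks), failures))
    fatal = sum(1 for c in combos if not raid10_survives(set(c), num_disks))
    return fatal / len(combos)


for n in (4, 8, 12):
    print(f"{n} disks: {fatal_fraction(n):.1%} of two-disk failures lose data")
```

With n disks, exactly 1/(n-1) of two-disk failures hit the same subset: a third with 4 disks, one in seven with 8. In RAID 5, by contrast, every second failure is fatal regardless of which disks are involved.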

If both disks in the same subset fail simultaneously, or the RAID controller stops working, most major cloud providers keep a redundant copy of your data on a server in a separate datacenter entirely. Their teams of engineers will probably recover it behind the scenes without you even noticing, and without any downtime.

Dedicated or Co-Located Server

Some lower-end, budget dedicated servers are configured in JBOD or RAID 0 mode by default. These configurations let you use the entire storage capacity of the hard drives, at the cost of having no redundancy. If one of the hard drives fails, you will lose some or all of your data. Here are some alternatives:

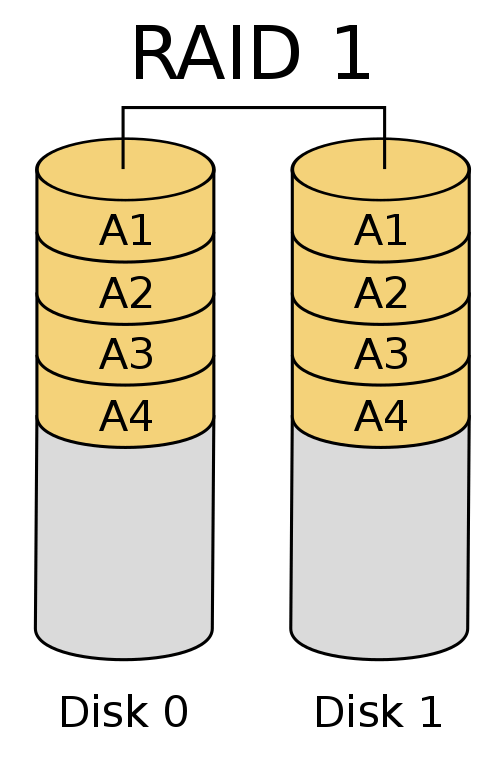

If your server has only two physical disks, you could reformat them as a RAID 1 (mirrored) array, but only 50% of the total capacity will be usable. Any data written to the array is written directly to both disks, without the performance overhead of calculating parity information. If one of the disks fails, you simply swap in a replacement and rebuild the RAID array.
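
The capacity tradeoff generalizes to the other RAID levels discussed in this article. This Python sketch assumes all disks are the same size (mixed sizes are usually limited by the smallest disk) and uses a hypothetical `usable_fraction` helper with lowercase level names chosen for illustration.

```python
def usable_fraction(level: str, num_disks: int) -> float:
    """Fraction of raw capacity available for data, assuming equal-sized disks."""
    if num_disks < 1:
        raise ValueError("need at least one disk")
    if level in ("jbod", "raid0"):
        return 1.0                              # all capacity, no redundancy
    if level == "raid1":
        if num_disks < 2:
            raise ValueError("RAID 1 needs at least 2 disks")
        return 1 / num_disks                    # every disk stores a full copy
    if level == "raid5":
        if num_disks < 3:
            raise ValueError("RAID 5 needs at least 3 disks")
        return (num_disks - 1) / num_disks      # one disk's worth of space holds parity
    if level == "raid10":
        if num_disks < 4 or num_disks % 2:
            raise ValueError("RAID 10 needs an even number of disks, at least 4")
        return 0.5                              # each block is stored on a mirrored pair
    raise ValueError(f"unknown level: {level}")


for level, disks in [("raid0", 2), ("raid1", 2), ("raid5", 3), ("raid5", 4), ("raid10", 8)]:
    print(f"{level} with {disks} disks: {usable_fraction(level, disks):.0%} usable")
```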

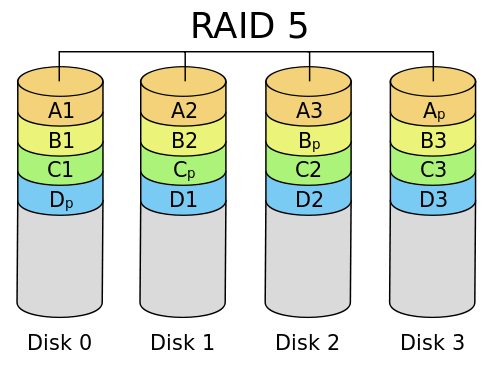

If you have 3 or more disks, you could set up a RAID 5 array (striping with parity), where the capacity of n-1 disks is usable: about 67% with 3 disks, 75% with 4 disks, and so on. The main drawback of this configuration is reduced write performance, because the data is made redundant by calculating parity information and striping it across the disks, rather than by mirroring it.
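
RAID 5 parity is a byte-wise XOR across the blocks of a stripe, which is what lets the array rebuild any single missing disk. The Python sketch below shows the rebuild and the read-modify-write cycle behind the write penalty; the stripe contents and function names are hypothetical, and in a real RAID 5 array the parity block's position rotates from stripe to stripe so no single disk becomes a bottleneck.

```python
from functools import reduce


def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equal-length blocks byte by byte; this is RAID 5's parity calculation."""
    if len({len(b) for b in blocks}) != 1:
        raise ValueError("all blocks in a stripe must be the same length")
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def update_parity(old_parity: bytes, old_data: bytes, new_data: bytes) -> bytes:
    """Recompute parity after a small write (read-modify-write).

    The controller must first read the old data and old parity, then write the
    new data and new parity: four disk I/Os for one logical write, versus two
    for a mirrored write. This is the source of RAID 5's write penalty.
    """
    return xor_blocks([old_parity, old_data, new_data])


# One stripe across three data disks (hypothetical contents); parity goes on a fourth.
stripe = [b"NEXT", b"SEAF", b"FILE"]
parity = xor_blocks(stripe)

# Disk 1 fails: XOR of the surviving blocks and the parity rebuilds its contents.
rebuilt = xor_blocks([stripe[0], stripe[2], parity])
print(rebuilt)  # b'SEAF'

# Overwrite block 2 and update parity without reading the other data blocks.
new_parity = update_parity(parity, stripe[2], b"SYNC")
print(new_parity == xor_blocks([stripe[0], stripe[1], b"SYNC"]))  # True
```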

On-Premise NAS or Server

If you have a 1-bay NAS device, such as the WD My Cloud, Seagate Personal Cloud, or entry-level Synology, QNAP or Netgear models, look out. With only one drive, you will lose your data whenever that drive fails, and it eventually will. These consumer or home office NAS devices should only be used to store non-critical data such as music or movies, or in conjunction with a NAS-to-NAS replication setup.

If you have a 2- or 4-bay NAS, desktop, or 1U microserver with up to four installed disks, your best bet is RAID 1, 5, or 10 to provide redundancy for the stored data, with the same considerations and tradeoffs as the dedicated server scenario above. It is also important to consider what types of HDDs you have installed in your server. Most consumer hard disks are not designed to spin continuously 24/7, which can lead to premature failure if used in a NAS. Instead, take a look at NAS hard drives such as WD Red, Seagate NAS, HGST Deskstar NAS, or enterprise drives designed for continuous read/write usage. These drives incorporate firmware features such as TLER (Time Limited Error Recovery), which keeps a drive from being dropped from the array while it retries a bad sector, along with vibration protection and less aggressive head parking, which reduce the physical wear-and-tear on the drive’s components.

For larger NAS units such as a 6- or 8-bay model, or a 2U/4U server chassis that supports 8 or more HDDs, RAID 10 is almost certainly the way to go instead of RAID 5. The more drives you add to a RAID 5 array, the greater the chance that a second drive fails before the first has been replaced and rebuilt, wiping out your data. RAID 5 is simply not worth the small gain in usable capacity when RAID 10 imposes a much smaller penalty on disk I/O, offers greater reliability, and recovers more quickly from a drive failure. If you are building a storage cluster for an enterprise environment, you should also consider using the ZFS file system with Error-Correcting Code (ECC) memory, which guards against data corruption (such as flipped bits) caused by memory errors during operation.
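
A rough way to compare the two levels is to ask what happens if one more disk fails while the first failed disk is being rebuilt. The Python sketch below uses a deliberately simplified model (an assumption, not a reliability forecast): each surviving disk fails independently during the rebuild window with a hypothetical probability `p`, and unrecoverable read errors are ignored.

```python
def rebuild_loss_probability(level: str, num_disks: int, p: float) -> float:
    """Chance of losing data if one more disk fails while a failed disk is rebuilt.

    Simplified model (assumption): each surviving disk independently fails during
    the rebuild window with probability p; unrecoverable read errors are ignored.
    """
    if level == "raid5":
        # Any of the n-1 surviving disks failing is fatal.
        return 1 - (1 - p) ** (num_disks - 1)
    if level == "raid10":
        # Only the failed disk's mirror partner matters.
        return p
    raise ValueError(f"unsupported level: {level}")


P = 0.01  # hypothetical per-disk failure probability during one rebuild
for n in (4, 8, 12):
    r5 = rebuild_loss_probability("raid5", n, P)
    r10 = rebuild_loss_probability("raid10", n, P)
    print(f"{n} disks: RAID 5 {r5:.2%}, RAID 10 {r10:.2%}")
```

Under these assumptions, RAID 5's exposure grows with every disk you add while RAID 10's stays flat. Real-world risk is likely higher than this model suggests, since rebuilds of large disks take longer.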

Backup, Snapshotting & Real-Time Replication Options

It’s essential to remember the mantra that “RAID is not backup.” If the location where you house your server suffers a fire or flood, or the equipment is physically stolen, RAID is a moot point. RAID also does nothing to protect you from human error or malicious users: for example, if you accidentally delete a file, a disgruntled employee sabotages your data, or ransomware infects your file server and encrypts all of its files.

The best way to protect against a physical disaster or unrecoverable RAID failure is real-time replication, where data is copied to a backup server in a separate location as soon as it’s written to the primary server. One of the file sync & share applications we recommend to our clients, Seafile Professional Edition, has a high-performance delta syncing algorithm that can mirror terabytes of data between two locations over a TLS-encrypted Gigabit connection.
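
Delta syncing means sending only the parts of a file that changed. The Python sketch below illustrates the general idea with fixed-size block hashes; it is a generic illustration, not Seafile's actual implementation, and the block size and function names are assumptions chosen for the demo.

```python
import hashlib

BLOCK_SIZE = 4  # deliberately tiny for the demo; real tools use much larger blocks


def block_hashes(data: bytes, size: int = BLOCK_SIZE) -> list[str]:
    """Hash each fixed-size block; the replica can send these instead of its data."""
    return [hashlib.sha256(data[i:i + size]).hexdigest() for i in range(0, len(data), size)]


def compute_delta(replica_hashes: list[str], new: bytes, size: int = BLOCK_SIZE) -> dict[int, bytes]:
    """Return only the blocks of `new` whose hash differs from the replica's copy."""
    delta = {}
    for idx, digest in enumerate(block_hashes(new, size)):
        if idx >= len(replica_hashes) or replica_hashes[idx] != digest:
            delta[idx] = new[idx * size:(idx + 1) * size]
    return delta


def apply_delta(old: bytes, delta: dict[int, bytes], new_length: int, size: int = BLOCK_SIZE) -> bytes:
    """Rebuild the new version on the replica from its old copy plus the changed blocks."""
    out = bytearray()
    for idx in range((new_length + size - 1) // size):
        out += delta.get(idx, old[idx * size:(idx + 1) * size])
    return bytes(out[:new_length])


old = b"hello world, sync me"
new = b"hello WORLD, sync me!"
delta = compute_delta(block_hashes(old), new)
print(sorted(delta))                              # [1, 2, 5]
print(apply_delta(old, delta, len(new)) == new)   # True
```

Fixed-size blocks handle in-place edits well, but inserting a byte near the start of a file shifts every later block. Production tools avoid this with rolling checksums (as rsync does) or content-defined chunking.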

The major advantage of real-time replication over snapshot-based backups is a near-zero Recovery Point Objective (RPO). If the primary server fails, the only data you lose is whatever was most recently written and hasn’t yet been replicated to the backup instance. In most cases this will be minimal, as long as you have a high-speed connection between the two sites.
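
The link between connection speed and RPO can be made concrete with a simple backlog model. In this Python sketch (a simplification, with a hypothetical write pattern), writes arrive in one-second buckets and the replication link drains the backlog at a constant rate; the peak backlog approximates how much data would be lost if the primary failed at the worst moment. It treats 1 Gbit/s as 125,000,000 bytes/s and ignores protocol overhead.

```python
def max_unreplicated_bytes(writes_per_second: list[int], link_bytes_per_second: int) -> int:
    """Worst-case amount of data written on the primary but not yet on the replica.

    Simplified model (assumption): writes are grouped into one-second buckets, and
    the replication link drains the backlog at a constant rate after each bucket.
    """
    backlog = 0
    worst = 0
    for written in writes_per_second:
        backlog += written                      # new data waiting to be replicated
        worst = max(worst, backlog)             # data at risk if the primary died now
        backlog = max(0, backlog - link_bytes_per_second)
    return worst


MB = 1_000_000
burst = [50 * MB, 300 * MB, 300 * MB, 0, 0, 0]   # hypothetical write pattern
for name, rate in [("1 Gbit/s", 125 * MB), ("100 Mbit/s", 12_500_000)]:
    print(f"{name}: up to {max_unreplicated_bytes(burst, rate) / MB:.0f} MB at risk")
```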

However, snapshots can still be beneficial alongside real-time replication, because replication faithfully copies accidental deletions or corrupted files to the replica as well. This is particularly true if you haven’t enabled “versioning” within the application to automatically retain older versions of files for a set period of time. Tools such as rsync, or commercial backup software, can automate incremental backups of your data on a daily or weekly basis, allowing you to roll back to a previous point in time if files are damaged or deleted on the storage server. Ideally, these backups should be taken over the LAN so they complete within a reasonable time, then archived to an off-site location if desired. If the volume of data is particularly large, snapshots of a copy-on-write (CoW) file system such as ZFS or btrfs are also worth considering as an alternative.
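
A common way to make daily incremental backups cheap is to hard-link files that haven't changed since the previous snapshot, the same idea behind rsync's `--link-dest` option. The Python sketch below is a minimal illustration of that technique, not a replacement for rsync or a commercial tool; the `snapshot` function, directory names, and file names are hypothetical, and it assumes all snapshots live on the same file system (hard links cannot cross file systems).

```python
import filecmp
import os
import shutil
import tempfile
from pathlib import Path
from typing import Optional


def snapshot(source: Path, dest: Path, previous: Optional[Path] = None) -> dict[str, int]:
    """Take an incremental, point-in-time copy of `source` into `dest`.

    Files whose contents match the `previous` snapshot are hard-linked rather
    than copied, so every snapshot looks complete but only changed files use
    new disk space. Assumes `dest` and `previous` are on the same file system.
    """
    stats = {"copied": 0, "linked": 0}
    for src_file in sorted(source.rglob("*")):
        if not src_file.is_file():
            continue                            # directories are created on demand
        rel = src_file.relative_to(source)
        target = dest / rel
        target.parent.mkdir(parents=True, exist_ok=True)
        prev_file = previous / rel if previous else None
        if prev_file and prev_file.is_file() and filecmp.cmp(src_file, prev_file, shallow=False):
            os.link(prev_file, target)          # unchanged: share the existing copy
            stats["linked"] += 1
        else:
            shutil.copy2(src_file, target)      # new or changed: store a fresh copy
            stats["copied"] += 1
    return stats


# Demo in a throwaway directory with hypothetical file names.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    data = root / "data"
    (data / "docs").mkdir(parents=True)
    (data / "docs" / "report.txt").write_text("v1")
    (data / "photo.jpg").write_bytes(b"unchanged image bytes")

    first = snapshot(data, root / "snap-1")
    (data / "docs" / "report.txt").write_text("v2")          # a file changes
    second = snapshot(data, root / "snap-2", previous=root / "snap-1")

    shared = os.path.samefile(root / "snap-1" / "photo.jpg", root / "snap-2" / "photo.jpg")
    old_report = (root / "snap-1" / "docs" / "report.txt").read_text()
    print(first, second, shared, old_report)
```

Because unchanged files are shared between snapshots, the snapshots must be treated as read-only: editing a hard-linked file in place would change it in every snapshot that references it.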

If you only have one business location, we suggest adopting a hybrid cloud strategy where you back up and/or replicate your data to a public cloud provider, or to a dedicated server (or cluster) in a commercial datacenter. As long as your use case permits it, there are ways to limit the privacy you give up by entrusting your data to a third party, such as encrypting the data with a strong cipher before uploading it to the cloud.

Disaster Recovery Planning for Cloud Storage Systems

Regardless of which cloud storage or file sync & share solution you choose, you should carefully consider the redundancy of your data, both on the server itself and at an off-site location. Managing your own data, or the data of your organization, is a huge responsibility which shouldn’t be taken lightly. If you are already using an application such as NextCloud or Seafile to provide a Dropbox-like service within your organization, you should review your data protection plan to ensure you can recover from a disaster scenario. Likewise, if you are thinking about adopting a new storage application, you should consider backup and replication as part of your implementation plan. Our infrastructure consultants would be pleased to discuss your specific needs – please contact our team today.